
Anthropic CEO Dario Amodei published a 20,000-word essay in January 2026 exploring what he called the adolescence of technology — the gap between what AI can do and what organisations are actually using it for. The gap is real. And according to the most recent enterprise adoption data, 79% of organisations reported challenges deploying AI effectively, even as the technology itself reached new capability heights.

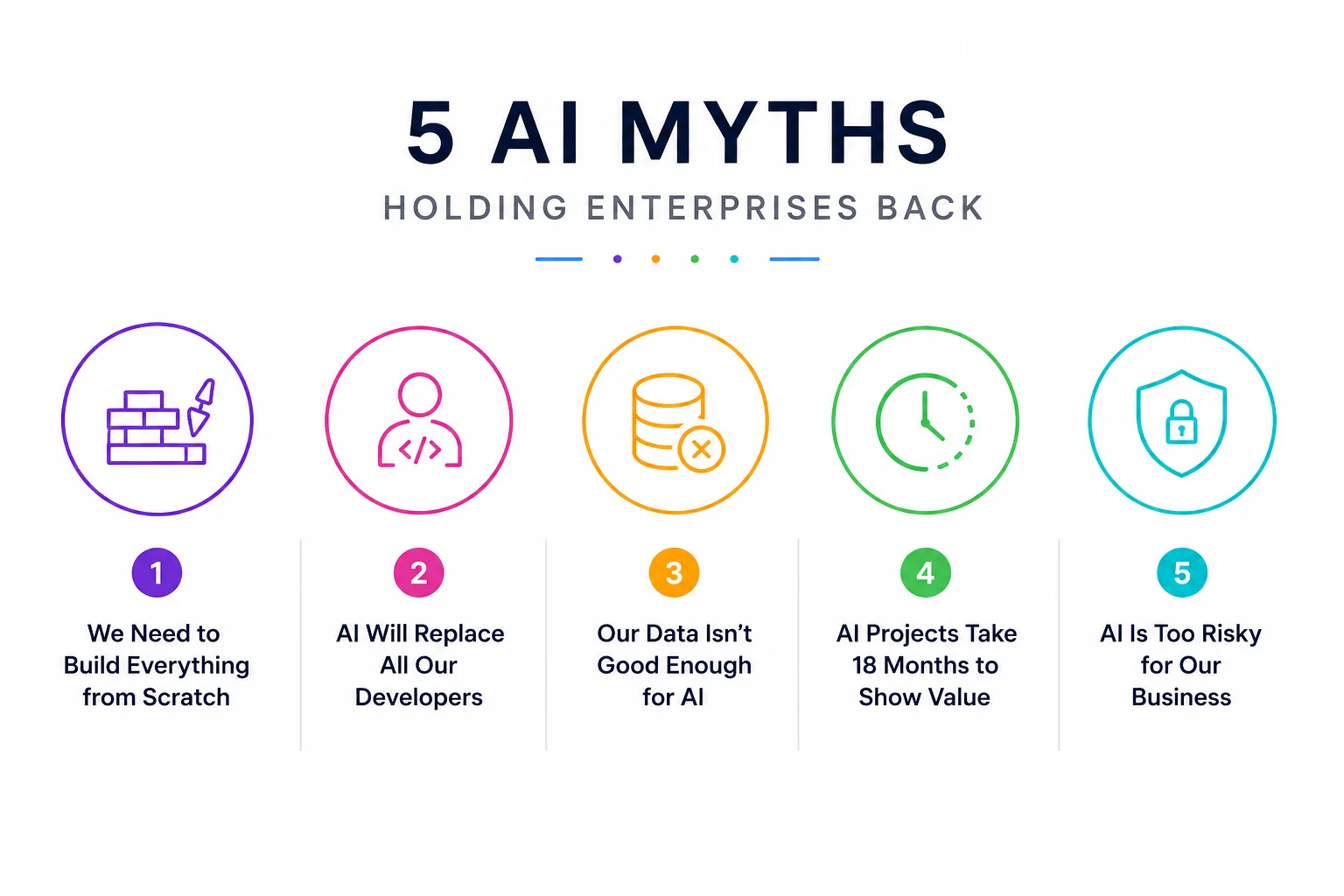

The culprit, more often than not, is not the technology. It is the myths that drive the decisions made before the technology is ever turned on. Five of these myths are doing significant damage to Indian enterprises right now — costing time, budget, and competitive position. Here they are, with the data and the reality that should replace them.

The belief: AI is so specialised and our business is so unique that we need to develop proprietary models, train them on our data, and build a custom technology stack from the ground up. Anything less is a half-measure that won't work in our context.

The reality: approximately 80% of enterprise AI value comes from integration, configuration, and deployment of existing foundation models — not from building new ones. The companies achieving the most measurable AI ROI in 2026 are not the ones that trained their own models. They are the ones that connected Claude, Gemini, or GPT to their existing systems quickly, focused on a specific use case, and iterated from there.

Custom model development — fine-tuning a foundation model on your proprietary data — has its place, but it belongs in the 20% of cases where the generic model genuinely doesn't perform well enough on your specific task. An invoice processing workflow, a customer support classifier, a contract review tool: none of these require a custom model. They require a well-designed prompt, a clean integration, and the right guardrails.
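To make "a well-designed prompt, a clean integration, and the right guardrails" concrete, here is a minimal Python sketch of a support-ticket classifier. Everything named in it is an assumption for illustration: the label set, the prompt wording, and `call_model`, which stands in for whichever client your team uses to send a prompt to a foundation model. `fake_model` exists only so the sketch runs without a real model behind it.

```python
from typing import Callable

# Hypothetical label set for a support-ticket router.
ALLOWED_LABELS = ("billing", "technical", "account", "other")
FALLBACK_LABEL = "needs_human_review"

PROMPT_TEMPLATE = (
    "Classify the support ticket into exactly one of these categories: "
    "{labels}.\n"
    "Reply with the category name only.\n\n"
    "Ticket:\n{ticket}"
)


def build_prompt(ticket_text: str) -> str:
    """Fill the prompt template with the allowed labels and the ticket."""
    return PROMPT_TEMPLATE.format(
        labels=", ".join(ALLOWED_LABELS), ticket=ticket_text.strip()
    )


def classify_ticket(ticket_text: str, call_model: Callable[[str], str]) -> str:
    """Classify a ticket, accepting only labels from the allowed set.

    `call_model` is a placeholder for any function that sends a prompt to a
    foundation model and returns its text reply.
    """
    raw_reply = call_model(build_prompt(ticket_text))
    label = raw_reply.strip().lower().rstrip(".")
    # Guardrail: anything outside the allowed set goes to a person.
    return label if label in ALLOWED_LABELS else FALLBACK_LABEL


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call, used only to make the sketch runnable."""
    return "Billing." if "refund" in prompt.lower() else "I am not sure"


routed = classify_ticket("I was charged twice and want a refund", fake_model)
unclear = classify_ticket("hello??", fake_model)
```

The guardrail is the last line of `classify_ticket`: a reply that is not one of the allowed labels is never passed downstream. It is routed to a person instead.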

The "build from scratch" myth costs Indian enterprises months of delay and millions of rupees in unnecessary infrastructure investment before they've validated whether the use case even works. The right approach: build a focused AI application on a foundation model, validate the business value, then invest in customisation where it demonstrably adds value.

The belief: AI coding tools are so capable that software engineers are becoming obsolete. We should stop hiring developers and wait for AI to do their jobs.

The reality: skilled engineers are becoming scarcer, not redundant. AI coding tools such as Claude Code, GitHub Copilot, and Cursor have dramatically increased what a single developer can build. They haven't decreased the need for developers who know what to build, how to design systems that scale, and how to review AI-generated code for correctness and security.

Claude Opus 4.7's 64.3% score on SWE-bench Pro, which corresponds to resolving roughly two-thirds of real GitHub issues autonomously, is genuinely remarkable. It also means the model fails on the remaining third. More importantly, the benchmark tests whether a model can fix a described issue in isolation. Enterprise software development involves ambiguous requirements, stakeholder alignment, architectural trade-offs, security reviews, deployment risk management, and institutional knowledge about why certain decisions were made two years ago. None of that is measured by SWE-bench Pro.

What AI coding tools actually do is make good engineers dramatically more productive. An engineer with Claude Code can ship in two days what previously took two weeks. The demand for engineers who can use these tools well — to design agent architectures, review AI-generated code, integrate AI into complex systems — is growing, not shrinking. Enterprises that stop investing in engineering capability because "AI will handle it" will find themselves unable to deploy AI at all, because deployment requires engineers.

The belief: AI requires clean, complete, well-labelled datasets. Our data is messy, inconsistent, and spread across legacy systems. We need to complete a multi-year data transformation project before we can use AI effectively.

The reality: you can start with the data you have. "Perfect data" is a myth — no enterprise has it, and waiting for it means waiting forever. The critical distinction is between AI use cases that require high-quality training data and those that don't.

Foundation models like Claude are already trained. You don't give them your messy data and ask them to learn from it. You give them context — a specific document, a specific record, a specific question — and they reason over it. For retrieval-augmented generation (RAG) applications, document analysis, report generation, and similar use cases, the model works with whatever data you provide in context. It doesn't require that data to be pre-processed into a perfect format.
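The idea of giving the model context rather than training data can be sketched in a few lines of Python. This is a deliberately naive illustration, not a production retrieval system: it ranks records by simple word overlap with the question, where a real RAG deployment would more likely use embedding search. The function names and the sample records are hypothetical, and the records are intentionally untidy to show that no clean-up step is needed before they are placed in the prompt.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its alphanumeric words as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to `top_k` documents sharing the most words with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in documents]
    # Drop documents with no shared words, then put the best matches first.
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]


def build_grounded_prompt(question: str, documents: list[str]) -> str:
    """Place the retrieved records in the prompt as context for the model."""
    context = "\n---\n".join(retrieve(question, documents))
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical, inconsistently formatted records from different systems.
RECORDS = [
    "Invoice 1042 from Acme, amt due: 18,500 INR ,  due 2026-03-01",
    "Holiday calendar for the Pune office",
    "acme payment terms: net 30",
]

prompt = build_grounded_prompt("When is invoice 1042 from Acme due?", RECORDS)
```

The resulting `prompt` string is what would be sent to the foundation model. The unrelated holiday calendar is left out, and the two Acme records go in exactly as they were stored.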

There are AI use cases that do require good data: training custom classifiers, fine-tuning models for specialised domains, building recommendation systems that learn from historical patterns. If those are your use cases, yes, data quality matters. But for the majority of enterprise AI applications with the fastest ROI, your existing data — however imperfect — is sufficient to start. What you learn from starting will tell you exactly where data quality actually matters, rather than guessing upfront and investing in the wrong places.

The belief: AI initiatives are long, complex transformation projects. Like ERP implementations, they require extensive planning, customisation, and change management before anything works. Expecting results in under a year is unrealistic.

The reality: with AI builders and focused use cases, measurable value in 2–4 weeks is not just achievable — it is the norm for well-designed deployments. The "18-month timeline" comes from the old paradigm of building custom AI systems from scratch, or from enterprises trying to deploy AI everywhere simultaneously rather than focusing on a specific, high-value workflow.

The organisations demonstrating the fastest AI ROI in 2026 share a common pattern: they pick one workflow, they pick the right tool, and they ship something that works. A customer support classification agent that routes tickets correctly. An invoice extraction tool that eliminates manual data entry for 200 invoices per day. A contract review assistant that flags non-standard clauses before the legal team reads the full document. Each of these can be designed, built, tested, and deployed in two to four weeks by a small team.

The 18-month timeline is not a property of AI projects. It is a property of poorly scoped AI projects that try to solve too many problems at once. The antidote is focus: one use case, one team, one clear success metric. Our AI Builder service is designed specifically for this: shipping a working AI application for a focused enterprise use case in weeks, not months.

The belief: AI hallucinations, bias, and unpredictable behaviour are the primary risks of enterprise AI deployment. The technology is fundamentally unsafe and the risk of deploying it outweighs the benefit.

The reality: the biggest risk in enterprise AI deployments is poor change management — not the model. Across the AI deployments that failed in 2025 and 2026, the dominant failure mode was not "the AI did something dangerous." It was "employees didn't use it, or used it without understanding what it was doing, or the organisation didn't have governance processes that matched how people were actually using it."

Model-level risks such as hallucinations, biased outputs, and incorrect information are real and require mitigation. But they are manageable with well-established techniques. Retrieval-augmented generation reduces hallucinations by grounding responses in real documents. Human review checkpoints catch errors before they reach customers or downstream systems. Audit logging provides visibility into what the AI did and why. These are not novel challenges; they are engineering and process challenges with known solutions.
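A human review checkpoint combined with audit logging can be as simple as the following Python sketch. It rests on assumptions that are not from any particular product: the `confidence` value is supplied by the caller (for example from a validation rule or a separate scoring step), the 0.8 threshold is arbitrary, and the audit log is an in-memory list standing in for a durable store.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

REVIEW_THRESHOLD = 0.8  # hypothetical cut-off; tune per use case


@dataclass
class Decision:
    record_id: str
    model_output: str
    confidence: float
    route: str
    timestamp: str


def route_output(
    record_id: str, model_output: str, confidence: float, audit_log: list[str]
) -> Decision:
    """Decide whether an AI output needs human review, and log the decision."""
    # Checkpoint: low-confidence outputs are held for a person to review.
    route = "auto_approve" if confidence >= REVIEW_THRESHOLD else "human_review"
    decision = Decision(
        record_id=record_id,
        model_output=model_output,
        confidence=confidence,
        route=route,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    # Audit trail: one JSON line per decision, appended and never rewritten.
    audit_log.append(json.dumps(asdict(decision)))
    return decision


log: list[str] = []
approved = route_output("inv-1", "total=18500 INR", 0.95, log)
held = route_output("inv-2", "total=unclear", 0.40, log)
```

Every decision is logged whether or not it was held for review, so the log answers both "what did the AI do" and "which outputs did a person check".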

The harder problem — and the one that actually kills most enterprise AI initiatives — is organisational. Employees who don't trust the tool and won't use it. Managers who don't know how to review AI outputs. Governance processes designed for the old way of working that create friction for the new. An IT organisation that blocks AI tools out of caution without providing an approved alternative, so people use unauthorised consumer tools instead.

The enterprises that succeed with AI invest as much in change management and governance as they do in technology. Our strategic consulting team has built AI adoption frameworks specifically for the Indian enterprise context — designed around the organisational and cultural dynamics that determine whether a technically sound AI deployment actually delivers business value.

All five myths share an underlying pattern: they create reasons to delay. Wait until the data is perfect. Wait until AI is proven safe. Wait until the platform strategy is decided. Wait until there's a clear 18-month roadmap.

The enterprises that are pulling ahead in 2026 are not waiting. They are picking focused use cases, shipping quickly, learning from real usage, and iterating. They are treating AI deployment like product development — hypothesis, build, measure, learn — not like infrastructure procurement.

If any of these myths are part of your organisation's current AI conversation, the most valuable thing you can do is test them with a small, real deployment. Build one thing, for one team, in four weeks. Let the results replace the myths.

That is exactly what Infurotech's AI Builder service is designed for. If you want to move from myth to result, start the conversation with our team. We will help you pick the right first use case and build it fast enough that the results speak for themselves.

The AI that Indian enterprises are hesitating to adopt is not waiting for them. Their competitors are already using it, and automation is already running in the organisations that moved first. The only thing the myths accomplish is to make catching up harder.