The compliance function at a mid-sized Indian NBFC or private bank looks, from the outside, like a well-staffed team with clear processes. Look more closely and you see what it actually is: a group of skilled people spending the majority of their time on data aggregation, format conversion, and exception triage. That work is necessary and repetitive, and it is increasingly hard to sustain at the volume that 2026 regulatory requirements demand.

RBI's reporting requirements have grown substantially in the past three years. SEBI's disclosure timelines have tightened. IRDAI's solvency monitoring cadence has increased. The DPDP Act 2023 has added personal-data audit obligations that did not exist before. Each addition to the compliance workload lands on teams that are already at capacity, producing the outcomes that regulators periodically note in inspection findings: delayed returns, gaps in transaction monitoring coverage, KYC deficiencies in loan files, and AML false positive rates so high that analysts cannot clear the alert queue.

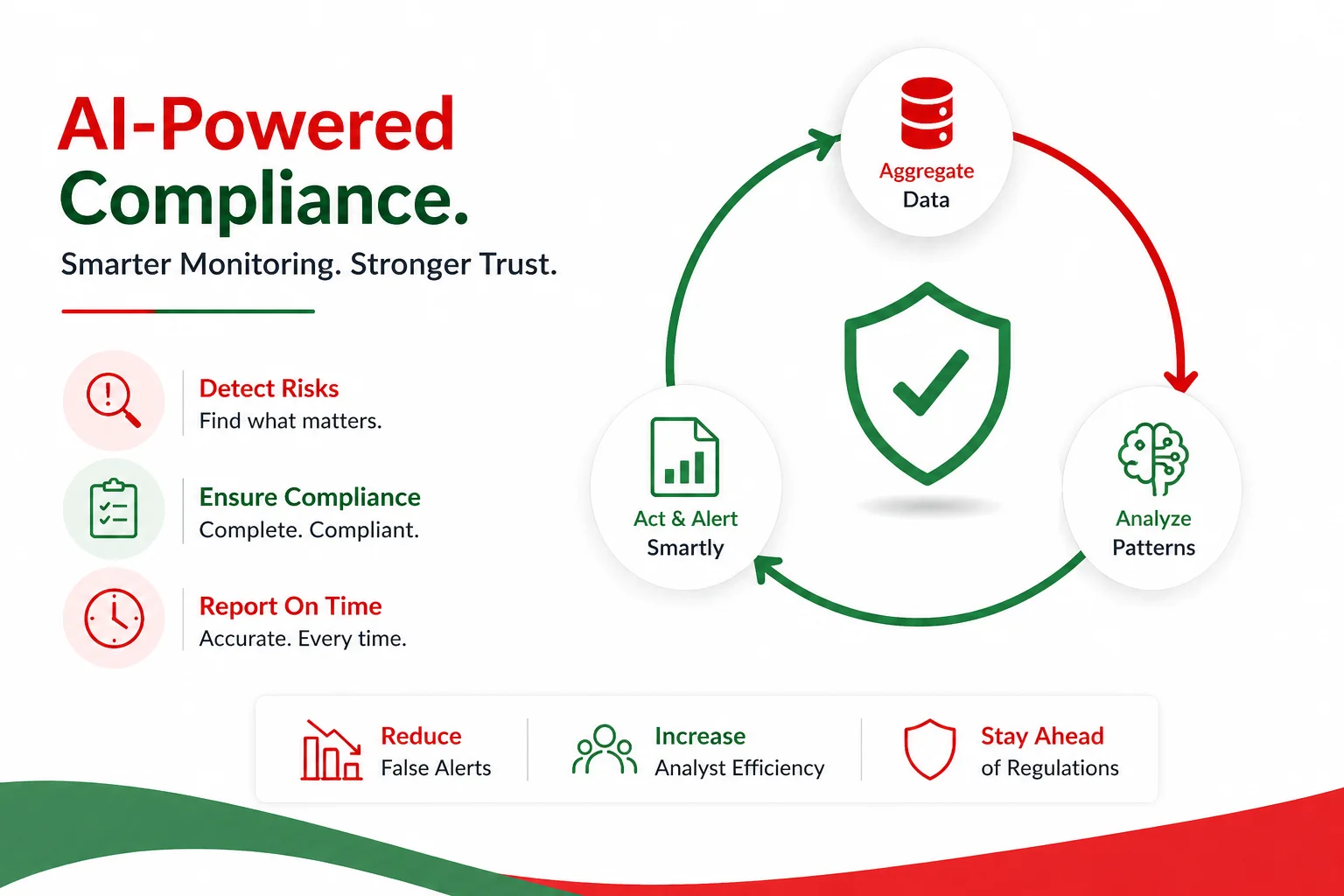

AI compliance monitoring does not solve the problem by replacing compliance officers. It solves it by doing the data aggregation, the pattern matching, and the initial triage — the work that consumes compliance capacity without requiring the judgment and interpretation that compliance professionals are actually trained to provide.

The most consequential failure mode in manual transaction monitoring is not that suspicious transactions go undetected. It is that the false positive rate is so high that analysts cannot get to the genuinely suspicious ones. Most Indian banks and NBFCs operating rules-based transaction monitoring systems report false positive rates above 95%. For every 100 alerts generated, fewer than 5 represent genuine AML concerns worth escalating. The remaining 95 or more still require analyst time to dismiss.

At transaction volumes of 10,000–50,000 per day — the range for a mid-sized NBFC — a 95% false positive rate means analysts are spending the vast majority of their working time closing alerts that were never real concerns, while the queue grows faster than they can clear it. This is not a staffing problem. It is an architecture problem: rules-based systems generate alerts based on thresholds, and thresholds cannot distinguish between a retired teacher making an unusually large withdrawal for a family event and a money mule moving funds to a collection account.

Regulatory reporting has a different failure mode: completeness and timeliness. RBI returns (BSR reports, Form A returns, SLR and CRR calculations) require aggregating data from multiple source systems, applying the regulatory definitions correctly, and submitting within the required window. When source system data is inconsistent or the aggregation is done manually in spreadsheets, errors slip into the return and force revised submissions, which attract scrutiny.

Loan documentation completeness is a third failure category. In high-volume lending environments, a meaningful percentage of loan files reach disbursement with documentation gaps — missing KYC documents, credit bureau reports that have expired, collateral valuations that were not refreshed. These gaps are found either at internal audit or at regulatory inspection, at which point the remediation cost is substantially higher than it would have been at origination.

AI-powered transaction monitoring replaces or augments the rules engine with a risk-scoring model that evaluates transactions in the context of customer behaviour, peer group patterns, and relationship history. Instead of flagging every transaction that exceeds a threshold, the model assigns a risk score based on how anomalous the transaction is relative to what is expected for that specific customer.

The practical result is a 60–80% reduction in false positives compared to rules-only systems. Analysts receive a smaller, higher-quality alert queue — one they can actually clear, and where a higher proportion of escalations represent genuine concerns. The model does not decide whether a transaction is suspicious; it decides whether the transaction is unusual enough to warrant analyst review. The judgment call remains with the human.
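To make the arithmetic concrete, here is a small sketch of what a 60% to 80% cut in false positives does to a queue of 100 alerts at a 95% false positive rate. It assumes, for illustration only, that the scoring model still raises every genuine alert; the function name is hypothetical.

```python
def queue_after_reduction(alerts, false_positive_rate, fp_reduction):
    """Return (remaining_alerts, share_genuine) after cutting false positives.

    Assumption: every genuine alert is still raised by the new model.
    """
    false_positives = alerts * false_positive_rate
    genuine = alerts - false_positives
    remaining_fp = false_positives * (1 - fp_reduction)
    remaining = genuine + remaining_fp
    return remaining, genuine / remaining


for reduction in (0.60, 0.80):
    remaining, share = queue_after_reduction(100, 0.95, reduction)
    print(f"{reduction:.0%} fewer false positives: "
          f"{remaining:.0f} alerts left, {share:.0%} genuine")
```

Under these assumptions the queue shrinks from 100 alerts to somewhere between 24 and 43, and the share of genuine alerts rises from 5% to roughly 12% to 21%. Most alerts are still false positives, but the queue becomes one an analyst can clear.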

The important architectural point: the model needs to be explainable. A risk score of 0.87 is not useful to a compliance officer who needs to write a Suspicious Transaction Report (STR) or justify a filing to the regulator. The system needs to surface the specific factors that drove the score ("transaction amount 4.2x customer's 90-day average, destination account opened 8 days ago, second transaction to this account this week") so the analyst can evaluate the explanation, not just the number.
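A minimal sketch of such an explanation layer follows. It is not a risk model: it only shows how factors like the ones quoted above could be turned into readable reasons alongside a score. The `Transaction` fields, the `explain_flag` name, and all thresholds are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Transaction:
    amount: float
    destination_account: str
    destination_opened: date
    booked_on: date


def explain_flag(txn, avg_90d_amount, prior_transfers_this_week):
    """Return human-readable reasons a transaction looks unusual."""
    reasons = []
    # Hypothetical threshold: 3x the customer's own 90-day average.
    if avg_90d_amount > 0:
        ratio = txn.amount / avg_90d_amount
        if ratio >= 3.0:
            reasons.append(
                f"transaction amount {ratio:.1f}x customer's 90-day average")
    # Hypothetical threshold: destination account younger than 30 days.
    age_days = (txn.booked_on - txn.destination_opened).days
    if age_days <= 30:
        reasons.append(f"destination account opened {age_days} days ago")
    # Repeat transfers to the same account within the week.
    if prior_transfers_this_week >= 1:
        reasons.append(
            f"transfer {prior_transfers_this_week + 1} to this account this week")
    return reasons


txn = Transaction(210000.0, "ACC-1", date(2026, 1, 2), date(2026, 1, 10))
for reason in explain_flag(txn, avg_90d_amount=50000.0,
                           prior_transfers_this_week=1):
    print("-", reason)
```

In a real deployment the reasons would more likely be derived from the model's own feature attributions, but the output contract is the same: a list of statements an analyst can check against the customer record.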

For structured regulatory returns — RBI's BSR-1, BSR-2, Form A, the CRILC filing for large borrowers, SEBI's shareholding disclosures — the data aggregation and formatting work can be automated end-to-end. The system pulls from source systems (core banking, loan management, treasury), applies the regulatory definitions, and generates the submission-ready file. The compliance officer's role shifts from data aggregation to review and sign-off.

The prerequisite is clean data lineage from source systems to the report — knowing exactly which field in the core banking system maps to which line in the RBI return, and having automated checks that catch data inconsistencies before they propagate into the submission. This is integration and data architecture work as much as AI work.
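One way to picture the lineage requirement is as an explicit map from report lines to source fields, with checks that run before anything is submitted. The sketch below assumes hypothetical report line names, system names, and field names; none of them are taken from an actual RBI return format.

```python
# Hypothetical lineage map: report line -> (source system, source field).
LINEAGE = {
    "total_deposits": ("core_banking", "deposit_balance"),
    "total_advances": ("loan_management", "outstanding_principal"),
}


def build_return(sources, lineage):
    """Aggregate each report line from its mapped field and collect issues."""
    report = {}
    issues = []
    for line, (system, field) in lineage.items():
        rows = sources.get(system, [])
        values = [row.get(field) for row in rows]
        missing = sum(1 for value in values if value is None)
        if missing:
            issues.append(
                f"{line}: {missing} record(s) in {system} missing '{field}'")
        report[line] = sum(value for value in values if value is not None)
    return report, issues


def reconcile(report, line, control_total, tolerance=0.01):
    """Check a report line against an independent control total."""
    return abs(report[line] - control_total) <= tolerance


sources = {
    "core_banking": [{"deposit_balance": 100}, {"deposit_balance": 250}, {}],
    "loan_management": [{"outstanding_principal": 400}],
}
report, issues = build_return(sources, LINEAGE)
print(report)
print(issues)
print(reconcile(report, "total_advances", control_total=400))
```

Keeping the map as data rather than burying it in queries means a reviewer can trace any figure in the return back to a named field, and a failed reconciliation blocks the submission instead of surfacing later as a revised filing.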

At the point of loan origination, an AI agent can check the loan file against the required document checklist, verify that each document meets the validity requirements (KYC documents not expired, credit bureau report dated within 90 days, collateral valuation within the required window), and flag gaps before disbursement rather than at audit. This is a straightforward workflow automation — the intelligence is in the rules about what constitutes a complete file, not in complex pattern recognition.
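The check described here can be sketched in a few lines. The 90-day credit bureau window comes from the text above; the document type names, the 365-day collateral window, and the shape of the `documents` dictionary are assumptions for illustration.

```python
from datetime import date

# Hypothetical checklist: document type -> maximum age in days.
# None means only the document's own expiry date is checked.
CHECKLIST = {
    "kyc_id": None,
    "credit_bureau_report": 90,
    "collateral_valuation": 365,  # assumed window, varies by policy
}


def check_loan_file(documents, as_of, checklist=CHECKLIST):
    """Return a list of documentation gaps for one loan file."""
    gaps = []
    for doc_type, max_age_days in checklist.items():
        doc = documents.get(doc_type)
        if doc is None:
            gaps.append(f"{doc_type}: missing")
            continue
        expires_on = doc.get("expires_on")
        if expires_on is not None and expires_on < as_of:
            gaps.append(f"{doc_type}: expired on {expires_on.isoformat()}")
        if max_age_days is not None:
            age = (as_of - doc["issued_on"]).days
            if age > max_age_days:
                gaps.append(f"{doc_type}: {age} days old, limit {max_age_days}")
    return gaps


loan_file = {
    "kyc_id": {"expires_on": date(2030, 1, 1)},
    "credit_bureau_report": {"issued_on": date(2025, 11, 1)},
}
for gap in check_loan_file(loan_file, as_of=date(2026, 3, 1)):
    print("-", gap)
```

An empty list means the file can proceed to disbursement; anything else goes back to the originating officer while the fix is still cheap.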

The value is in the timing: a documentation gap found before disbursement takes 10 minutes to resolve. The same gap found at internal audit costs a compliance officer two days of file retrieval and correspondence. Found at a regulatory inspection, it triggers a finding that requires a formal response and remediation plan.

Exposure limits, concentration risk limits, sectoral limits, and individual borrower limits need continuous monitoring as the loan book and investment portfolio change through the day. Manual limit monitoring — typically a daily or weekly report — means limit breaches are discovered after the fact. Automated real-time monitoring flags approaching breaches before they occur, giving the business time to act rather than to explain.
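As a rough sketch, "flagging approaching breaches" amounts to comparing utilisation against a warning level set below the limit itself. The 90% warning level, the limit names, and the function names below are assumptions, not regulatory values.

```python
def limit_status(exposure, limit, warn_at=0.90):
    """Classify utilisation of a single limit."""
    utilisation = exposure / limit
    if utilisation > 1.0:
        return "BREACH"
    if utilisation >= warn_at:
        return "WARNING"
    return "OK"


def check_limits(exposures, limits, warn_at=0.90):
    """Return (limit name, status, utilisation) for limits needing attention."""
    alerts = []
    for name, limit in limits.items():
        exposure = exposures.get(name, 0.0)
        status = limit_status(exposure, limit, warn_at)
        if status != "OK":
            alerts.append((name, status, round(exposure / limit, 3)))
    return alerts


# Hypothetical limits and exposures, in the same currency unit.
limits = {"sector:real_estate": 100.0, "borrower:ABC": 100.0, "sector:nbfc": 100.0}
exposures = {"sector:real_estate": 92.0, "borrower:ABC": 40.0, "sector:nbfc": 105.0}
for alert in check_limits(exposures, limits):
    print(alert)
```

Running a check like this on every change to the loan book, rather than in a daily batch, is what turns the "WARNING" state into time the business can use.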

The RBI's framework for digital lending and AI in financial services has three requirements that directly affect compliance monitoring system design: explainability, model risk management, and audit trails.

Explainability means the system must be able to explain why a decision was made or why a flag was raised — in terms that a regulator can evaluate. Black-box models that produce outputs without traceable reasoning fail this requirement. The architecture implication: use explainable AI techniques (SHAP values, attention weights, rule-extraction from model outputs) so that every alert and every automated decision has a documented rationale.

Model risk management means the AI models powering compliance functions need the same governance as other models used in credit or risk decisions: validation before deployment, ongoing performance monitoring, periodic revalidation, and documentation of the validation process. The model used for AML risk scoring needs a model risk management file the same as the model used for credit scoring.

Audit trails mean every action taken by an AI compliance system — every alert generated, every document checked, every report produced — needs to be logged with a timestamp, the data inputs, and the output. This is not optional for DPDP 2023 compliance either: any AI system processing personal data needs to be able to demonstrate, on demand, what processing was done on whose data and on what basis.
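A minimal sketch of such a log is shown below, recording the timestamp, inputs, and output for each action. Chaining each entry to the previous one with a hash is an added assumption here, one common way to make after-the-fact edits detectable; the class and field names are hypothetical, and a production system would write to durable, access-controlled storage rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained record of actions taken by the system."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash everything except the stored hash itself.
        body = {key: value for key, value in entry.items() if key != "hash"}
        encoded = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def record(self, action, inputs, output, timestamp=None):
        when = timestamp or datetime.now(timezone.utc)
        entry = {
            "timestamp": when.isoformat(),
            "action": action,
            "inputs": inputs,
            "output": output,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True if no entry has been altered or reordered."""
        prev_hash = ""
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("alert_generated", {"txn_id": "T-1"}, {"score": 0.87})
log.record("document_checked", {"loan_id": "L-9"}, {"gaps": []})
print(log.verify())
```

Because inputs are stored next to outputs, the same log can answer the DPDP-style question of what processing was done on whose data, provided the inputs carry a customer or data-principal identifier.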

The deployments that are working share common characteristics: they are focused on a specific, well-defined compliance problem rather than attempting broad compliance automation; they have strong data foundations (clean source system data and reliable API access); and they are built with human-in-the-loop checkpoints at high-stakes decision points.

Mid-sized NBFCs are finding the most immediate value in loan documentation completeness automation — the problem is well-defined, the data is available, the false positive rate is low, and the cost savings are visible within weeks of deployment. AML transaction monitoring augmentation is the most common second deployment, typically after the data infrastructure work needed for documentation automation has been completed.

Private sector banks are deploying more broadly: transaction monitoring, regulatory reporting automation, and limit monitoring in parallel, typically as part of a broader compliance technology modernisation programme rather than as point solutions.

Fintechs, particularly those operating under RBI's account aggregator framework or as payment aggregators, are deploying real-time reporting automation first — the regulatory reporting cadence for these licence types is high and the penalty for late or inaccurate filing is significant.

The build-buy-integrate decision depends on the size and complexity of the compliance function and the firm's technology capability.

For large private banks with significant technology teams, building on top of an AI platform (using models via API, building the integration layer in-house) gives the best long-term control and the ability to customise for institution-specific risk profiles. The build approach requires 12–18 months for a meaningful first deployment and ongoing model governance capability.

For mid-sized NBFCs and smaller banks, buying a compliance technology platform and customising it for Indian regulatory requirements is typically faster and lower-risk than a full build. The risk is vendor dependency and the gap between what the vendor's standard product covers and what Indian-specific regulations require — which is often significant.

The integrate approach — connecting AI capabilities to the existing compliance technology stack rather than replacing it — is often the most practical for organisations that have existing compliance systems and need to augment rather than replace. This is particularly relevant for transaction monitoring, where existing rules engines can be augmented with AI risk scoring without replacing the surveillance platform.

Our automation practice works with BFSI clients on compliance monitoring deployments that are designed from the start to meet RBI's explainability and audit trail requirements. We do not deploy black-box models for compliance decisions — every system we build includes documented rationale for every automated output.

Our industry solutions team brings specific knowledge of the RBI, SEBI, and IRDAI regulatory frameworks that govern AI use in financial services — reducing the compliance-of-the-compliance-system risk that firms face when deploying AI in regulated functions.

For firms at the strategy stage — deciding which compliance functions to automate first, what technology architecture to use, and how to build the model governance framework — our strategic consulting team provides the regulatory and technology assessment before any build decision is made.

If your compliance team is spending more time on data aggregation than on judgment and analysis, that is a signal worth acting on. Talk to our team about where AI compliance monitoring can close the gap — and what it realistically takes to deploy it in a way that satisfies your regulators.