Enterprise decisions about AI are being made on outdated information. Not because decision-makers are uninformed — because the field moves faster than conventional wisdom updates. The myths that circulated in 2024 about AI limitations are still driving procurement hesitation, architecture decisions, and change management approaches in Indian enterprises in 2026, despite the fact that many of them have been definitively overturned by what has shipped in the past twelve months.

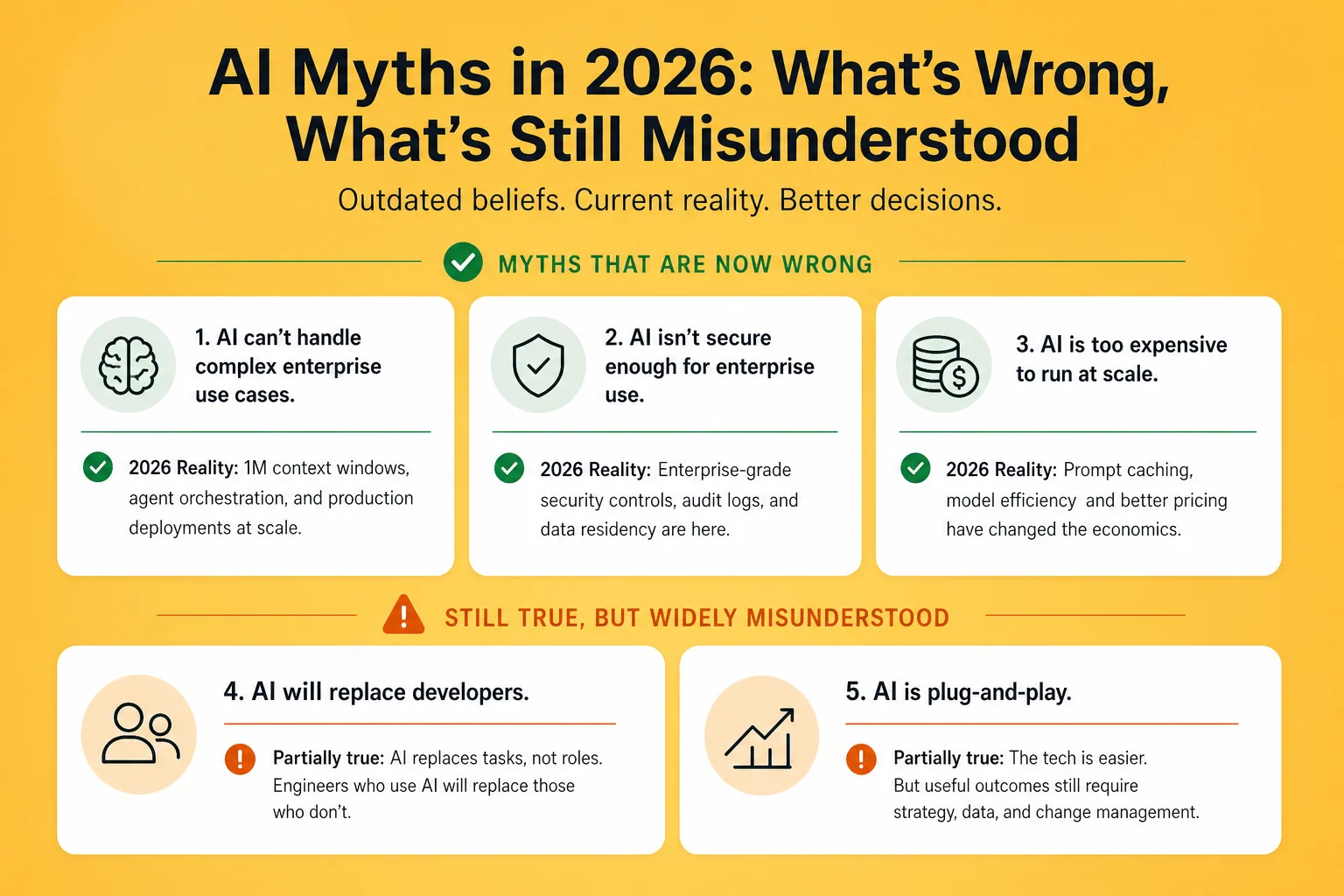

What follows is a myth-busting exercise grounded in 2026 evidence: three beliefs that were reasonable in 2024 but are now demonstrably wrong, and two that are still partially true but widely misunderstood in ways that lead to bad decisions.

The myth: AI models make things up. They confabulate citations, invent facts, produce plausible-sounding but incorrect information. This fundamental unreliability makes them unsuitable for any enterprise use case where accuracy matters.

The 2026 reality: Hallucination is a manageable engineering problem, not a fundamental barrier to enterprise use. The two techniques that have made it effectively manageable at enterprise scale are retrieval-augmented generation (RAG) and long-context grounding.

With RAG, the model does not rely on its training data to answer questions about your business. It retrieves the relevant documents, reads them, and answers from what it reads, much as a human analyst would. Grounding does not make fabrication impossible, but instructing the model to answer only from the retrieved passages, and to say so when they do not contain the answer, sharply reduces unsupported claims and makes every answer checkable against its sources. Properly implemented RAG can bring factual error rates in enterprise AI applications down to a level comparable with human error rates on the same tasks.
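A minimal sketch of the retrieve-then-answer pattern, assuming a toy keyword-overlap scorer in place of the embedding search a production system would use. The function names and the sample documents are hypothetical, and no model API is called; the point is the shape of the grounded prompt, which restricts the model to the retrieved sources and gives it an explicit way to decline.

```python
from __future__ import annotations

import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercased word tokens; a production retriever would use embeddings instead.
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return ids of up to k documents, ranked by query-term overlap."""
    query_terms = Counter(tokenize(query))

    def score(doc_id: str) -> int:
        doc_terms = Counter(tokenize(docs[doc_id]))
        return sum(min(count, doc_terms[term]) for term, count in query_terms.items())

    ranked = sorted(docs, key=score, reverse=True)
    # Drop documents with no overlap at all rather than padding the context.
    return [doc_id for doc_id in ranked[:k] if score(doc_id) > 0]


def build_grounded_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    sources = "\n\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(query, docs, k))
    return (
        "Answer using only the sources below and cite the source id for each claim. "
        "If the sources do not contain the answer, say that you do not know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


# Hypothetical sample documents for illustration only.
docs = {
    "policy-7": "Refunds are processed within 14 days of a return being received.",
    "policy-9": "Shipping to Pune and Mumbai takes 3 business days.",
    "hr-2": "Employees accrue 18 days of annual leave.",
}
print(build_grounded_prompt("How many days until refunds are processed?", docs, k=1))
```

Because every claim in the answer is supposed to cite a source id, a reviewer (or an automated check) can trace each statement back to the passage it came from.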

The 1M-token context window (now generally available) provides a complementary approach: load the entire relevant document set into context and have Claude reason over the actual source material. For a contract review, load the full set of relevant contracts; for a financial analysis, load the complete dataset, provided it fits within the window. When the model reasons over the real data rather than its training memory, hallucination is greatly reduced.
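A sketch of the long-context alternative, assuming a rough four-characters-per-token estimate (real tokenizers vary, so count tokens properly in practice). It wraps each document in tagged delimiters so answers can point back to a source, and returns None when the set does not fit, which is the cue to fall back to retrieval. The function and constant names are illustrative, not part of any specific API.

```python
from __future__ import annotations

CONTEXT_WINDOW = 1_000_000  # tokens, matching the 1M window described in the text
CHARS_PER_TOKEN = 4         # rough heuristic for English prose; an assumption


def estimate_tokens(text: str) -> int:
    # Ceiling division so any non-empty text counts as at least one token.
    return -(-len(text) // CHARS_PER_TOKEN)


def build_full_context_prompt(
    question: str, docs: dict[str, str], reserve_for_output: int = 8_000
) -> str | None:
    """Put every document in context, or return None if the set will not fit."""
    parts = [f'<document id="{doc_id}">\n{text}\n</document>' for doc_id, text in docs.items()]
    prompt = "\n".join(parts) + f"\n\nQuestion: {question}"
    # Leave headroom for the model's answer inside the same window.
    if estimate_tokens(prompt) + reserve_for_output > CONTEXT_WINDOW:
        return None  # too large for one pass: fall back to retrieval
    return prompt
```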

The myth was reasonable in the era of 8,000-token context windows, when RAG was still an experimental technique. It is not reasonable in 2026, when both are production-grade enterprise engineering patterns.

The myth: A 1M token context window is always better than a 200K window, which is always better than a 32K window. More context is always better.

The 2026 reality: Context window size and reasoning quality are different properties of a model, and conflating them leads to poor architecture decisions.

Loading 900,000 tokens into a 1M context window and asking a precise question about a specific detail is typically less effective than loading the 50,000 relevant tokens and asking the same question. The model has finite "attention": its ability to focus on the most relevant information degrades as context length increases, particularly for specific lookups in very long documents. The right mental model is not "bigger window = smarter" but "bigger window = more flexible": useful when you genuinely need all that context, counterproductive when you load it simply because you can.

The practical implication for enterprise AI architecture: use large context windows for tasks that genuinely benefit from complete context (full codebase analysis, cross-document consistency checks, multi-year trend analysis). Use focused, well-curated smaller contexts for specific question-answering tasks, even if you have the tokens to load more. Quality of context matters as much as quantity.
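The rule of thumb above can be sketched as a small routing function. The task categories, the 50,000-token focused budget (borrowed from the example in the text), and the `Chunk` structure are assumptions for illustration; relevance scores are assumed to come from whatever retriever you already use.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str
    tokens: int        # token count of this chunk
    relevance: float   # score from an existing retriever (assumed given)


# Hypothetical task categories that genuinely need the complete corpus.
WHOLE_CORPUS_TASKS = {"codebase_analysis", "consistency_check", "trend_analysis"}


def select_context(task: str, chunks: list[Chunk], focused_budget: int = 50_000) -> list[Chunk]:
    """Load everything for whole-corpus tasks; otherwise pack the most relevant chunks."""
    if task in WHOLE_CORPUS_TASKS:
        return list(chunks)  # assumes the full set fits the model's window
    selected: list[Chunk] = []
    used = 0
    # Greedy packing: highest relevance first, skipping chunks that would bust the budget.
    for chunk in sorted(chunks, key=lambda c: c.relevance, reverse=True):
        if used + chunk.tokens <= focused_budget:
            selected.append(chunk)
            used += chunk.tokens
    return selected
```

The loop keeps scanning after a chunk is skipped, so a smaller, less relevant chunk can still fill the remaining budget; a stricter design might stop at the first chunk that does not fit.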

The myth: AI generates code that looks correct but contains subtle bugs, security vulnerabilities, and logic errors. It cannot be trusted in production systems — especially for anything involving security or financial data.

The 2026 reality: Claude Code, used with human review and automated testing, is now standard engineering practice at many Fortune 500 companies. The question is no longer "can AI-generated code be trusted?" but "what is the right review process for AI-generated code?"

Claude Opus 4.7 scores 64.3% on SWE-bench Pro, meaning it correctly resolves nearly two-thirds of the benchmark's tasks, which are drawn from real GitHub issues, without human intervention. Claude Sonnet 4.6 scores 70.3% on SWE-bench Verified, a different task set, so the two figures are not directly comparable. These are not toy benchmarks: they test whether the model can read a real issue report, find the relevant code, and produce a fix that passes the repository's tests.

The appropriate response to these numbers is not blind trust — it is calibrated trust with verification. AI-generated code should be reviewed by a human engineer, the same way a junior engineer's code is reviewed by a senior. AI-generated tests should be reviewed for test quality, not just test pass rate. The code review process for AI-generated output should be tuned to the areas where AI makes characteristic mistakes (subtle off-by-one errors, incorrect edge case assumptions, security-adjacent code like authentication and cryptography) rather than treating all AI output with blanket suspicion.
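One way to make "verification proportionate to the risk" concrete is to route each change to a review tier automatically. This is a sketch under stated assumptions: the path patterns, the tier names, and the `ai_generated` flag (which your tooling would have to supply) are all hypothetical.

```python
from __future__ import annotations

import re

# Hypothetical patterns for security-adjacent code; tune these to your repository layout.
HIGH_RISK_PATTERNS = [r"auth", r"crypto", r"payment", r"session", r"password"]


def review_tier(changed_paths: list[str], ai_generated: bool) -> str:
    """Map a change to a review tier proportionate to its risk."""
    touches_sensitive = any(
        re.search(pattern, path, re.IGNORECASE)
        for path in changed_paths
        for pattern in HIGH_RISK_PATTERNS
    )
    if touches_sensitive:
        # Authentication, cryptography and similar code always gets senior eyes.
        return "senior-review+security-checklist"
    if ai_generated:
        # Standard review, plus a check that tests probe boundary cases
        # (off-by-one errors, empty inputs) rather than merely passing.
        return "standard-review+edge-case-tests"
    return "standard-review"
```

Substring matching is deliberately broad (a path containing "author" would also match "auth"); over-flagging a few changes is the cheaper error here.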

Teams that have adopted this calibrated approach report shipping faster, with defect rates comparable to or better than their pre-AI baselines. The myth is a false binary: it is not "trust completely" or "trust nothing." It is "trust and verify, where the verification is proportionate to the risk."

The myth: Open-source models have caught up with frontier models.

The partial truth: Llama 4 Scout and Maverick, released in April 2025, brought open-source model performance to parity with GPT-4o and Gemini Flash on broad benchmarks. For many tasks, such as content generation, summarisation, and classification at scale, the gap between frontier and open-source models has genuinely narrowed.

Where the gap remains large: Agentic reasoning, complex multi-step tool use, and tasks requiring sustained planning across many steps. Claude Opus 4.7's 64.3% on SWE-bench Pro versus Llama 4 Maverick's 38% on the same benchmark illustrates the gap. For workflows where the model needs to maintain a plan across many steps, recover from unexpected intermediate results, and judge which tools to use in what order, frontier models are substantially more capable.

The practical decision framework: open-source for high-volume, single-turn tasks where cost and data sovereignty matter and benchmark results show the model meets your performance requirements. Frontier for agentic tasks, complex reasoning, and production applications where errors are costly and the model's judgment quality directly affects business outcomes.
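The framework reduces to a short decision function. This is a sketch only: the `Workload` fields are hypothetical yes/no answers a team would fill in from its own requirements and evaluations, and the rule defaults to frontier whenever the case for open-source is not clearly made.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Workload:
    agentic: bool               # multi-step planning and tool use?
    single_turn: bool           # one request, one response?
    high_volume: bool           # large enough that per-call cost dominates?
    error_cost_high: bool       # do mistakes carry real business cost?
    open_model_meets_bar: bool  # do benchmark or eval results meet requirements?


def choose_model_tier(w: Workload) -> str:
    """Apply the open-source versus frontier split described in the text."""
    if w.agentic or w.error_cost_high:
        return "frontier"
    if w.single_turn and w.high_volume and w.open_model_meets_bar:
        return "open-source"
    return "frontier"  # default when the open-source case is not clearly made
```

The function encodes only the two-way split described above; hard constraints, such as data that may not leave your infrastructure, would need to be checked separately.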

The myth: AI progress has plateaued because scaling has hit its limits.

The partial truth: Scaling laws do have limits. Adding compute and data yields diminishing returns: empirically, model loss falls roughly as a power law of training compute, so each further gain costs multiplicatively more. Architectural innovations are required, and they are harder to achieve than pure scaling.
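The limits of pure scaling can be made concrete with a worked example, assuming the common power-law form L ∝ C^(-α) for training loss L as a function of compute C. The exponent used here (α = 0.05) is an illustrative assumption, not a measured value for any particular model, and training loss is not the same thing as benchmark capability.

```python
def loss_ratio(compute_multiplier: float, alpha: float) -> float:
    """Factor by which loss changes when compute is multiplied, if L is proportional to C**-alpha."""
    return compute_multiplier ** -alpha


def compute_needed(loss_reduction_factor: float, alpha: float) -> float:
    """Compute multiplier required to divide loss by loss_reduction_factor."""
    return loss_reduction_factor ** (1 / alpha)


ALPHA = 0.05  # illustrative exponent only; real values depend on the setup

print(round(loss_ratio(10, ALPHA), 3))     # 0.891: 10x compute cuts loss by only ~11%
print(f"{compute_needed(2, ALPHA):,.0f}")  # 1,048,576: compute multiplier to halve loss
```

With any small exponent the pattern is the same: each constant-factor improvement demands a multiplicative increase in compute, which is why architectural gains matter.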

Where the evidence points differently: The capability trajectory has not plateaued in any measurable way at the frontier. Claude Opus went from 26% on SWE-bench in early 2024 to 64.3% on SWE-bench Pro in April 2026, roughly two and a half times the earlier score on real-world software engineering tasks in a little over two years (the two scores come from different SWE-bench variants, so treat the comparison as indicative rather than exact). Reasoning capabilities, tool use reliability, and multimodal performance have all improved significantly and consistently.

The practical implication: planning your AI strategy around the assumption that current capabilities are "good enough for what we need and will stay here" is a planning error. The strategy you build for a model at 64% SWE-bench needs to accommodate a model at 80% SWE-bench in 12–18 months. Build for the trajectory, not the current state.
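A quick worked calculation shows why the move from 64% to 80% matters more than the 16-point gap suggests: what changes your workflow is the share of tasks that still need a human. It uses only the figures in the paragraph above; treating a benchmark resolve rate as a proxy for your own workload is itself an assumption.

```python
def unresolved_share(resolve_rate: float) -> float:
    # Fraction of tasks the model does not resolve and a human must handle.
    return 1.0 - resolve_rate


today, projected = 0.64, 0.80  # current score and the article's 12-18 month projection
drop = 1 - unresolved_share(projected) / unresolved_share(today)

print(f"Unresolved tasks: {unresolved_share(today):.0%} -> {unresolved_share(projected):.0%}")
print(f"Relative reduction in tasks needing human work: {drop:.0%}")
# Unresolved tasks: 36% -> 20%
# Relative reduction in tasks needing human work: 44%
```

A workflow designed around a human fixing roughly a third of outputs would, on these numbers, see that rework volume nearly halve.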

Enterprise AI strategy built on 2024 myths produces 2024 decisions in 2026 conditions — too cautious where the risks have been managed, too confident where real challenges remain. The cost of outdated beliefs shows up as delayed adoption, over-engineered solutions to problems that are already solved, and under-investment in the governance that actually matters.

Infurotech's strategic consulting engagements start with an honest assessment of current AI capabilities and limitations — grounded in what has actually shipped, not in vendor marketing or two-year-old research papers. Our AI Builder service delivers production applications that demonstrate current capability directly. And our automation services show actual results from real enterprise deployments, not benchmarks.

If your organisation's AI strategy is still being shaped by myths about hallucinations or code reliability that were current in 2024, a conversation with our team is worth the hour. Reach out — we will tell you what has changed and what still needs careful handling.