AI Agents

Ninety-seven percent of executives deployed AI agents in the past year. Seventy-nine percent of organisations reported AI adoption challenges in the same period. Taken from the same enterprise AI survey, these two statistics tell the story of Q1 2026 in miniature: deploying agents is easy; deploying them well is not.

The "deploying agents" part has been solved by a wave of capable tools — Claude Cowork, Claude Code Remote Control, OpenAI Operator, Google ADK, and a dozen agent frameworks. The "deploying them well" part requires answering a question that most organisations have not systematically addressed: which tasks should your AI agents do autonomously, which should they do with human oversight, and how do you design the governance layer that keeps the difference meaningful?

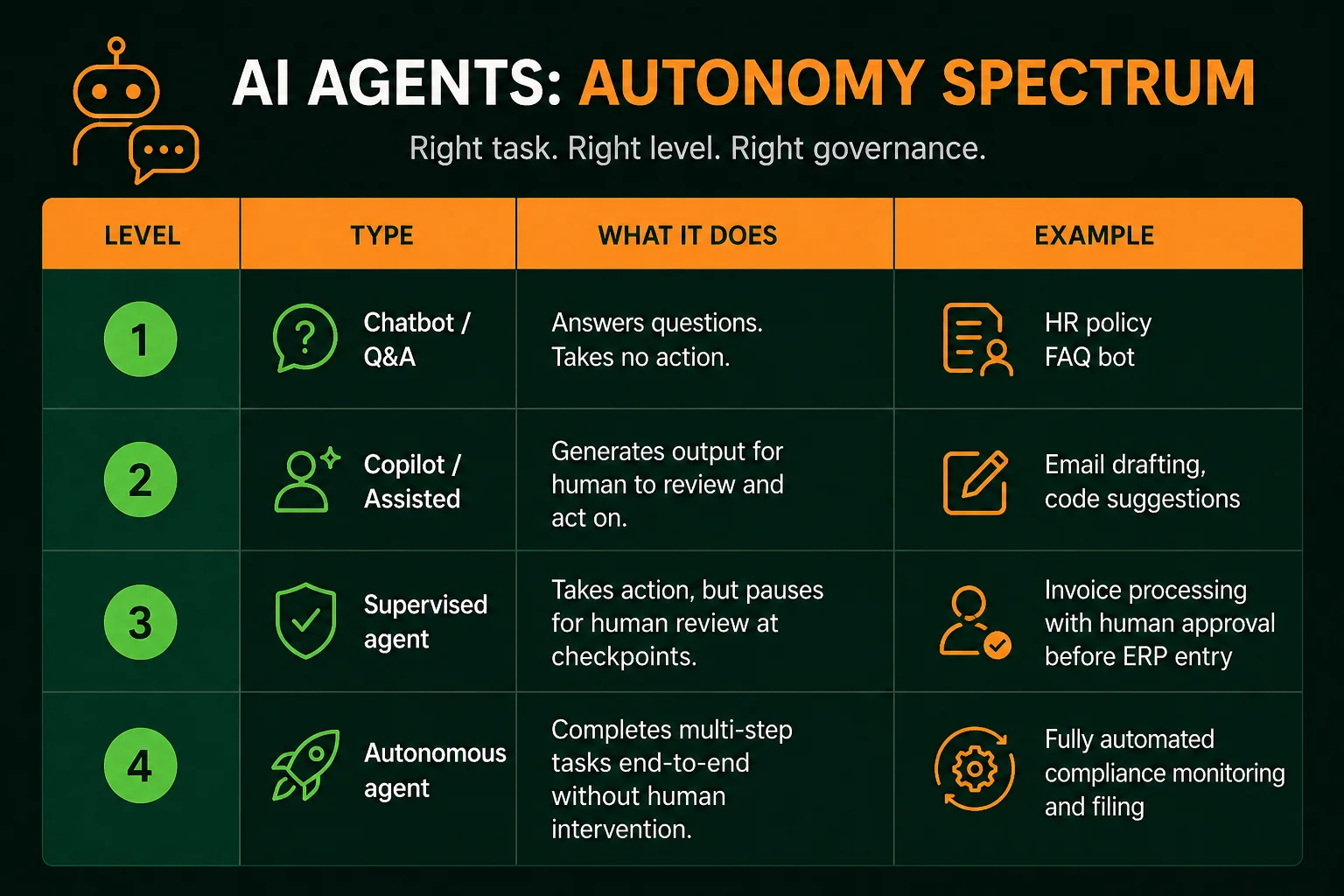

Autonomous and assisted AI are not a binary. They exist on a spectrum, and understanding where different deployment types sit on that spectrum is the foundation of any sensible governance approach.

| Level | Type | What it does | Example |
|---|---|---|---|
| 1 | Chatbot / Q&A | Answers questions. Takes no action. | HR policy FAQ bot |
| 2 | Copilot / Assisted | Generates output for a human to review and act on | Email drafting, code suggestions |
| 3 | Supervised agent | Takes action, but pauses for human review at checkpoints | Invoice processing with human approval before ERP entry |
| 4 | Autonomous agent | Completes multi-step tasks end-to-end without human intervention | Fully automated compliance monitoring and filing |

The deployment question is not "should we use autonomous AI?" — it is "what level of autonomy is appropriate for this specific task?" The answer depends on four properties of the task itself, not on a general organisational policy about AI.

A clarification that changes how most governance discussions should proceed: "autonomous" in production enterprise AI is almost never 100%. Even the most autonomous production agent deployments typically have human review at specific checkpoints: before irreversible financial transactions, before external communications, and before writing any data that feeds a regulatory filing. What varies is the density of those checkpoints, not their existence.

The real question in most enterprise agent governance discussions is not "autonomous yes or no" — it is "at which specific points in this specific workflow does human review need to happen, and what exactly does that human review gate?" This framing is more tractable and leads to more useful governance design than the binary framing.
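One way to make this framing concrete is to tag each step of a workflow with the properties that trigger review, then derive the checkpoints from those tags. The Python sketch below is minimal. It uses the three triggers named above (irreversible actions, external communications, regulatory filings) and the invoice workflow from the table. `Step` and `checkpoints` are hypothetical names for illustration, not part of any agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step in an agent workflow (hypothetical model for illustration)."""
    name: str
    irreversible: bool = False              # e.g. a transaction executed, a record deleted
    external: bool = False                  # e.g. an email to a customer or regulator
    affects_regulatory_filing: bool = False


def checkpoints(workflow: list[Step]) -> list[str]:
    """Return, in order, the steps that need a human review gate before they run."""
    return [
        step.name
        for step in workflow
        if step.irreversible or step.external or step.affects_regulatory_filing
    ]


invoice_flow = [
    Step("extract_invoice_fields"),
    Step("match_purchase_order"),
    Step("post_to_erp", irreversible=True),
    Step("email_vendor_confirmation", external=True),
]
print(checkpoints(invoice_flow))  # ['post_to_erp', 'email_vendor_confirmation']
```

Because the gates are derived from step properties, adding a new irreversible step to the workflow adds a checkpoint automatically. Nobody has to remember to add it by hand.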

For every agent deployment — regardless of how confident your team is about the AI's capability — answer these four questions before production deployment. If you cannot answer them clearly, you are not ready to deploy.

Blast radius is the scope of harm from an agent error: financial exposure, data affected, regulatory consequences, customer impact, reputational risk. An agent that sends a summary email to an internal distribution list has a small blast radius. An agent that submits a GST filing, executes a bank transfer, or sends a communication to a regulator has a large one.

Blast radius determines the appropriate level of human oversight, the required testing depth before production deployment, and the rollback plan. High blast radius tasks require supervised agent deployment (Level 3) regardless of how capable the AI appears in testing.
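Below is a minimal Python sketch of that rule. It assumes a deliberately coarse, two-value blast radius (small or large) in which any one of the harm dimensions listed above counts as large. `classify_blast_radius` and `allowed_autonomy` are hypothetical names. Test results are intentionally not an input, which mirrors "regardless of how capable the AI appears in testing".

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """The four levels from the table above."""
    CHATBOT = 1
    COPILOT = 2
    SUPERVISED = 3
    AUTONOMOUS = 4


class BlastRadius(IntEnum):
    SMALL = 1
    LARGE = 2


def classify_blast_radius(*, financial: bool = False, sensitive_data: bool = False,
                          regulatory: bool = False, customer_facing: bool = False,
                          reputational: bool = False) -> BlastRadius:
    """Conservative by design: any single harm dimension makes the radius LARGE."""
    if any((financial, sensitive_data, regulatory, customer_facing, reputational)):
        return BlastRadius.LARGE
    return BlastRadius.SMALL


def allowed_autonomy(requested: Autonomy, radius: BlastRadius) -> Autonomy:
    """Cap the requested level: a LARGE radius never goes above SUPERVISED."""
    if radius is BlastRadius.LARGE:
        return min(requested, Autonomy.SUPERVISED)
    return requested


# An internal summary email versus a GST filing.
print(allowed_autonomy(Autonomy.AUTONOMOUS, classify_blast_radius()).name)  # AUTONOMOUS
print(allowed_autonomy(Autonomy.AUTONOMOUS,
                       classify_blast_radius(financial=True, regulatory=True)).name)  # SUPERVISED
```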

Map the workflow and identify every action that cannot be undone: a transaction executed, a document submitted, an email sent to an external party, a record deleted. Each irreversible action needs an explicit human confirmation step, and that step must be in place before the agent goes into production. This is not optional for high-stakes systems; it is a governance requirement.

The confirmation step does not need to be a detailed review. It can be a "confirm this before we proceed" notification with 60 seconds to review. But it must exist, and the agent must not be able to bypass it.
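A minimal Python sketch of such a gate follows. It assumes a hypothetical `approve` callback that shows the description to a person and returns `True` (approved), `False` (rejected) or `None` (no answer within the window). The 60-second default comes from the paragraph above. `run_irreversible` and `NotConfirmed` are illustrative names, not a library API.

```python
from typing import Callable, Optional

# Hypothetical approver interface: shows `description` to a human and returns
# True (approved), False (rejected) or None (no answer within timeout_s).
Approver = Callable[[str, float], Optional[bool]]


class NotConfirmed(Exception):
    """Raised when an irreversible action lacks explicit human approval."""


def run_irreversible(description: str, action: Callable[[], object],
                     approve: Approver, timeout_s: float = 60.0) -> object:
    """Run `action` only after a human explicitly approves it.

    Rejection and silence are treated identically: the default is not to act.
    """
    decision = approve(description, timeout_s)
    if decision is not True:
        raise NotConfirmed(f"not approved: {description}")
    return action()
```

The function alone cannot stop an agent from calling, say, a payments API directly. In this design, the "cannot bypass" property comes from registering only the wrapped versions of irreversible tools with the agent, so the unwrapped call is never in its toolset.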

Every production agent deployment requires a complete audit log: what the agent accessed, what decisions it made, what actions it took, and when. Without this, you cannot investigate incidents, satisfy compliance requirements, or build the organisational confidence that comes from demonstrating that the agent's behaviour is observable and reviewable.

Audit requirements differ by sector. For Indian BFSI firms under RBI guidelines, AI agent audit logs may be subject to the same retention requirements as other transaction records. For healthcare organisations processing personal data under the Digital Personal Data Protection (DPDP) Act 2023, audit logs of AI interactions with that data are part of the demonstrable safeguards requirement. Design the audit logging before deployment, not after.
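Here is a minimal Python sketch of such a log. It assumes an append-only JSON Lines file. Each entry records the items named above: what the agent accessed, what it decided, what it did, and when. Each entry is also chained to the previous one with a SHA-256 hash, so later edits are detectable. The hash chain is one common tamper-evidence technique, not a stated regulatory requirement. `AuditLog` and `verify` are hypothetical names, and retention and access control are out of scope here.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    # Canonical serialisation (sorted keys) so the same entry always hashes the same.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained audit log stored as JSON Lines."""

    def __init__(self, path: str):
        self.path = path
        self._prev = GENESIS
        try:  # resume the chain if the file already has entries
            with open(path, encoding="utf-8") as f:
                lines = f.read().splitlines()
            if lines:
                self._prev = json.loads(lines[-1])["hash"]
        except FileNotFoundError:
            pass

    def record(self, agent_id: str, accessed: list[str], decision: str, action: str) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),  # when
            "agent_id": agent_id,
            "accessed": accessed,  # what the agent read
            "decision": decision,  # what it decided, and why
            "action": action,      # what it actually did
            "prev_hash": self._prev,
        }
        entry["hash"] = _digest(entry)  # hash computed before the key is added
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps(entry, sort_keys=True) + "\n")
        self._prev = entry["hash"]
        return entry


def verify(path: str) -> bool:
    """Recompute the chain; False if any entry was edited or an earlier one deleted.

    Truncating the tail is not detected unless the last hash is also stored elsewhere.
    """
    prev = GENESIS
    with open(path, encoding="utf-8") as f:
        for line in f:
            entry = json.loads(line)
            claimed = entry.pop("hash")
            if entry["prev_hash"] != prev or _digest(entry) != claimed:
                return False
            prev = claimed
    return True
```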

For data entry tasks, rollback is a database operation. For document generation, rollback is deletion. For financial transactions, external communications, or regulatory filings, rollback is complex, costly, or impossible. The rollback plan determines how cautious the rest of your governance design needs to be: easy rollback permits more autonomous operation, difficult rollback requires more human oversight before action.
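Taken together, the four questions act as a pre-deployment gate. The Python sketch below records the answers for one deployment and lists whatever is still open. An empty list means the team can answer all four. The field names and the three-value `Rollback` scale are assumptions for illustration. The sketch also checks only that a rollback plan exists; it does not judge whether the plan is adequate.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Rollback(Enum):
    EASY = "easy"              # e.g. a database operation, deleting a generated document
    COSTLY = "costly"
    IMPOSSIBLE = "impossible"  # e.g. a submitted filing or a sent external email


@dataclass
class GovernanceReview:
    """Answers to the four questions for one deployment (None = not yet answered)."""
    blast_radius: Optional[str] = None
    irreversible_actions: Optional[list[str]] = None  # [] means "mapped, none found"
    gated_actions: list[str] = field(default_factory=list)
    audit_log_designed: bool = False
    rollback: Optional[Rollback] = None


def blockers(review: GovernanceReview) -> list[str]:
    """Return every open issue; an empty list means all four questions are answered."""
    issues = []
    if not review.blast_radius:
        issues.append("blast radius not assessed")
    if review.irreversible_actions is None:
        issues.append("irreversible actions not mapped")
    else:
        issues += [f"no human confirmation gate for: {a}"
                   for a in review.irreversible_actions
                   if a not in review.gated_actions]
    if not review.audit_log_designed:
        issues.append("audit logging not designed")
    if review.rollback is None:
        issues.append("no rollback plan")
    return issues


gst = GovernanceReview(blast_radius="regulatory and financial",
                       irreversible_actions=["submit_gst_return"],
                       audit_log_designed=True, rollback=Rollback.IMPOSSIBLE)
print(blockers(gst))  # ['no human confirmation gate for: submit_gst_return']
```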

Based on blast radius and reversibility, enterprise tasks fall into clear categories:

Safe for full autonomy (with monitoring):

Appropriate for supervised autonomy (human checkpoint before high-stakes actions):

Human-in-the-loop required for every decision:

Indian enterprises deploying autonomous agents face regulatory considerations that do not apply in all markets:

Labour law: Automated HR decisions — attendance-based warnings, performance classification, shift allocation — touch labour law in ways that vary significantly by sector and state. Autonomous HR agents require legal review of the specific decisions they are making before deployment.

DPDP Act 2023: Automated processing of personal data requires demonstrable safeguards, including the ability to show what processing was done, on whose data, and why. Audit logs and explainability for personal-data-touching agents are not optional under this framework.

Sector regulators: RBI, SEBI, IRDAI, and NABARD each have guidance on AI use in regulated activities. BFSI enterprises deploying agents in credit decisioning, fraud detection, or customer communication should have their compliance team review the specific deployment against current regulatory guidance before go-live.

At Infurotech, we design autonomous agent deployments with the governance layer as a first-class deliverable, not an afterthought. The blast radius assessment, checkpoint design, audit logging, and rollback plan are part of the architecture document — alongside the agent's functional capabilities.

Our automation services deliver governed agent workflows that satisfy the four governance questions above. Our AI Builder service includes human-in-the-loop controls as standard for any agent that touches high-blast-radius operations. Our strategic consulting team works with enterprise governance and compliance stakeholders to design the policy layer that governs agent deployment at scale. For regulated industries, our industry solutions team brings sector-specific regulatory awareness to every agent deployment design.

The 97% of executives who deployed agents last year are not all satisfied. The 79% who reported adoption challenges are finding that capability is necessary but not sufficient — governance is the other half. Talk to our team about building the governance layer that makes your agent deployments sustainable.