AI Updates

Enterprise AI applications are quietly becoming expensive. Not because the models charge more, but because production apps send the same context — system prompts, user profiles, conversation history, document chunks — with every single request. You pay for those tokens every time. For a high-volume application making thousands of API calls per day, the repeated context can represent 60–80% of total token cost.

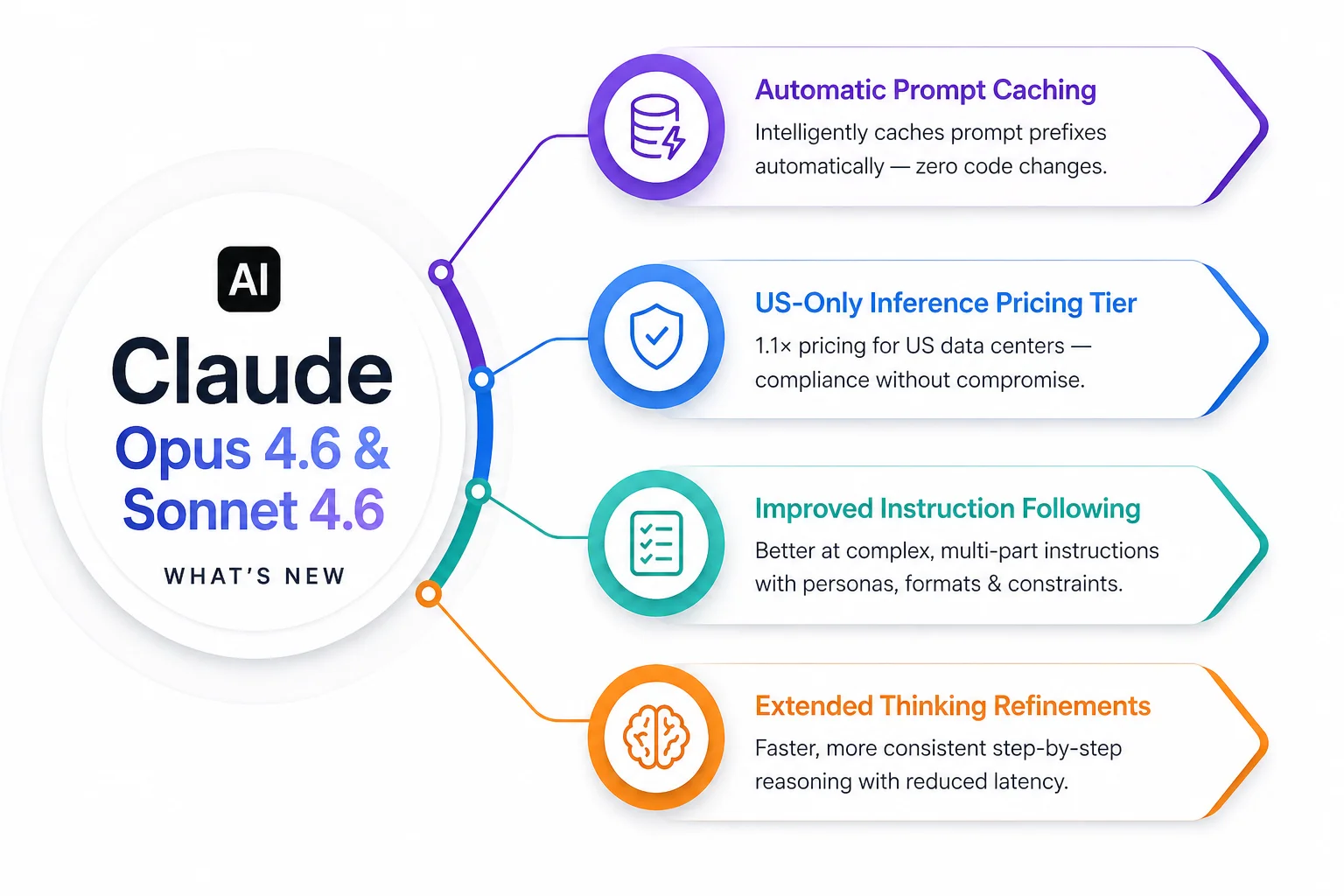

Claude Opus 4.6 and Sonnet 4.6, released in early February 2026, address this directly with automatic prompt caching, a capability you can benefit from without changing any code. It is the most commercially significant update in these releases, and it arrives alongside meaningful performance improvements for teams running Claude in production.

The headlining features across both releases:

To see why this matters, it helps to understand how prompt caching works mechanically.

Every Claude API call sends a sequence of tokens. The model processes all of them to generate a response. In a production enterprise application, a typical API call looks like this: a system prompt (500–2,000 tokens defining the AI's role, constraints, and context), optional retrieved documents from a RAG system (1,000–10,000 tokens), the user's message (50–300 tokens), and conversation history (variable).
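To estimate how much of a call is repeated context, you can add up the token counts per component. The sketch below uses hypothetical counts chosen from within the ranges above. As a simplifying assumption it treats conversation history as non-repeated, even though earlier turns do recur within a session.

```python
from dataclasses import dataclass

@dataclass
class CallTokens:
    """Hypothetical token counts for one API call, split by component."""
    system_prompt: int   # same on every call
    retrieved_docs: int  # same when the same documents are retrieved
    history: int         # treated as non-repeated here (a simplification)
    user_message: int    # unique per call

    def total(self) -> int:
        return self.system_prompt + self.retrieved_docs + self.history + self.user_message

    def repeated_share(self) -> float:
        """Fraction of input tokens that repeat across calls and could be cached."""
        return (self.system_prompt + self.retrieved_docs) / self.total()

call = CallTokens(system_prompt=1_500, retrieved_docs=4_000, history=800, user_message=200)
print(call.total())                    # 6500
print(f"{call.repeated_share():.1%}")  # 84.6%
```

Size is only part of the picture. Order matters too: the repeated components sit at the start of the call, and that placement is what lets them form a reusable prefix.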

The system prompt and retrieved documents are often identical or near-identical across thousands of requests per day. Without caching, you pay full price for those repeated tokens on every call. With prompt caching, the model stores a processed representation of the cacheable portion and reuses it on subsequent requests. Cached tokens are billed at roughly 10% of the normal input price, a reduction of approximately 90%, and latency falls because those tokens don't need to be reprocessed. Only the portion that matches exactly from the start of the prompt can be reused, so stable content should come first.
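A per-request calculation makes the 90% figure concrete. This sketch assumes Sonnet 4.6's $3 per million input tokens (the price quoted later in this article) and cache reads billed at 10% of that price. The 5,500 cached and 1,000 fresh tokens are hypothetical. It ignores output tokens and the one-off premium for writing the cache.

```python
def input_cost_usd(cached_tokens: int, fresh_tokens: int,
                   price_per_mtok: float = 3.00,
                   cached_read_multiplier: float = 0.10) -> float:
    """Input-token cost of one request when `cached_tokens` are read from cache."""
    per_token = price_per_mtok / 1_000_000
    return (cached_tokens * cached_read_multiplier + fresh_tokens) * per_token

uncached = input_cost_usd(cached_tokens=0, fresh_tokens=6_500)
cached = input_cost_usd(cached_tokens=5_500, fresh_tokens=1_000)
print(f"{uncached:.6f} {cached:.6f}")   # 0.019500 0.004650
print(f"{1 - cached / uncached:.1%}")   # 76.2%
```

The cached tokens themselves become 90% cheaper. The saving on the whole request is smaller (about 76% here) because the 1,000 fresh tokens are still billed at full price.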

Previously, taking advantage of this required engineers to structure their prompts correctly and explicitly mark cache breakpoints in their API calls. This was a non-trivial engineering change, especially for teams who hadn't designed for it from the start. Automatic caching removes this requirement: the API analyses your prompts and handles caching decisions transparently.
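The explicit approach described above marks a cache breakpoint with a `cache_control` field on a content block in the Messages API request. The sketch below builds such a request body as a plain dict so it runs without network access. The model ID `claude-sonnet-4-6` and the helper name `build_request` are assumptions for illustration; check exact model IDs against Anthropic's models documentation. Prefixes shorter than a model-specific minimum length are not cached.

```python
import json

def build_request(system_prompt: str, documents: list[str], user_message: str,
                  model: str = "claude-sonnet-4-6") -> dict:
    """Hypothetical helper: Messages API body with one explicit cache breakpoint."""
    return {
        "model": model,  # assumed model ID; confirm against the models list
        "max_tokens": 1024,
        "system": [
            {"type": "text", "text": system_prompt},
            {
                "type": "text",
                "text": "\n\n".join(documents),
                # Everything up to and including this block forms the cached prefix.
                "cache_control": {"type": "ephemeral"},
            },
        ],
        # The per-request user message comes after the breakpoint, so it is not cached.
        "messages": [{"role": "user", "content": user_message}],
    }

body = build_request("You are a support assistant.",
                     ["Refund policy text", "Shipping FAQ text"],
                     "Where is my order?")
print(json.dumps(body, indent=2))
```

With the official Python SDK, the same dict can be passed as `client.messages.create(**body)`. Teams that already mark breakpoints, or that want precise control over what is cached, can keep this structure.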

The savings depend entirely on your application's prompt structure, but for a well-structured enterprise application, the numbers are significant.

| Application type | Typical repeated-context share | Estimated token-cost reduction |
|---|---|---|
| Customer support AI with fixed knowledge base | 70–80% | 60–70% |
| Document analysis tool (same docs, many questions) | 85–95% | 75–85% |
| Code review assistant with codebase context | 60–75% | 50–65% |
| Conversational agent with long session history | 40–60% | 35–55% |
| Single-turn API calls with minimal shared context | Under 30% | Minimal |
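The reductions in the table track roughly 0.9 times the repeated-context share, which is what a 90% discount on the repeated input tokens would produce. The sketch below also accounts for cache misses. It assumes Anthropic's documented multipliers of 0.1 times the base input price for cache reads and 1.25 times for 5-minute cache writes. It models input tokens only, so if output tokens make up a large part of your bill, the saving on the total bill will be smaller.

```python
CACHE_READ = 0.10   # cache reads: 10% of the base input price
CACHE_WRITE = 1.25  # 5-minute cache writes: 125% of the base input price

def input_cost_reduction(repeated_share: float, hit_rate: float = 1.0) -> float:
    """Fractional drop in input-token cost versus no caching.

    repeated_share: fraction of input tokens that form the cached prefix.
    hit_rate: fraction of requests that read the prefix from cache; the rest
              miss and pay the higher write price for the prefix.
    """
    prefix_multiplier = hit_rate * CACHE_READ + (1 - hit_rate) * CACHE_WRITE
    return repeated_share * (1 - prefix_multiplier)

for share in (0.40, 0.60, 0.75, 0.80, 0.95):
    print(f"share {share:.0%}: {input_cost_reduction(share):.1%} at 100% hits, "
          f"{input_cost_reduction(share, hit_rate=0.9):.1%} at 90% hits")
```

With an 80% share and every request hitting the cache, the model gives 72%, the top of the customer-support row. At a 90% hit rate it gives 62.8%. If requests are too infrequent for the cache to stay warm, every call pays the write premium and caching costs more than it saves.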

For Indian enterprises running AI applications at moderate to high volumes (10,000 to 500,000 API calls per day), the cost reduction from automatic caching can translate to several lakhs of rupees per year in API spend. More importantly, it reduces response latency, which directly affects user experience in customer-facing applications.
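To sanity-check the rupee figures for your own volumes, multiply the daily repeated tokens by the per-token saving. This sketch relies on several assumptions: a hypothetical 2,000 repeated tokens per call, Sonnet 4.6's quoted $3 per million input tokens, a full 90% read discount, and an illustrative exchange rate of ₹85 per US dollar. It ignores cache-write premiums and misses.

```python
def annual_savings_inr(calls_per_day: int, repeated_tokens_per_call: int,
                       price_per_mtok_usd: float = 3.00,
                       read_discount: float = 0.90,
                       usd_to_inr: float = 85.0) -> float:
    """Rough yearly saving from caching the repeated prefix (assumptions in defaults)."""
    daily_repeated_mtok = calls_per_day * repeated_tokens_per_call / 1_000_000
    daily_saving_usd = daily_repeated_mtok * price_per_mtok_usd * read_discount
    return daily_saving_usd * 365 * usd_to_inr

for calls in (10_000, 100_000, 500_000):
    lakhs = annual_savings_inr(calls, repeated_tokens_per_call=2_000) / 100_000
    print(f"{calls:>7,} calls/day -> about {lakhs:,.1f} lakh INR/year")
```

The result scales linearly with both call volume and prefix size, so halving either one halves the estimate.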

A common question from enterprise teams is when to use which model tier. The post-4.6 decision framework is simpler than it used to be.

Claude Opus 4.6 is for your hardest problems: complex multi-step reasoning, nuanced analysis where accuracy is critical, agentic tasks that require planning across many steps, and use cases where the cost of a wrong answer is high. Pricing: $5 per million input tokens, $25 per million output tokens. Use for: financial modelling, legal contract review, complex code generation, strategic analysis.

Claude Sonnet 4.6 is the workhorse for production enterprise applications: high capability at lower cost, well-suited for most enterprise AI tasks including customer support, document extraction, content generation, and API integrations. Pricing: $3 per million input tokens, $15 per million output tokens. Use for: everything that doesn't require Opus-level reasoning.

Claude Haiku 4.5 is for high-volume, cost-sensitive workloads where latency and throughput matter more than maximum intelligence: real-time classification, quick summarisation, intent detection, routing. Pricing: $1 per million input tokens, $5 per million output tokens. Use for: preprocessing pipelines, classification at scale, live chat routing.

The rule of thumb: start with Sonnet 4.6 for any new application, use Haiku for high-volume preprocessing steps within that application, and escalate to Opus for specific complex reasoning tasks that Sonnet gets consistently wrong.
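The rule of thumb above can be encoded as a small routing function: Sonnet by default, Haiku for preprocessing steps, and Opus only for tasks you have explicitly escalated. The task categories and the function name `pick_model` are hypothetical. The model ID strings are assumptions; confirm them against Anthropic's models documentation before use.

```python
from enum import Enum

class Task(Enum):
    CLASSIFY = "classify"
    ROUTE = "route"
    SUPPORT = "support"
    EXTRACT = "extract"
    CONTRACT_REVIEW = "contract_review"

# Assumed model IDs, for illustration only.
HAIKU = "claude-haiku-4-5"
SONNET = "claude-sonnet-4-6"
OPUS = "claude-opus-4-6"

# High-volume preprocessing steps that go to Haiku.
HAIKU_TASKS = {Task.CLASSIFY, Task.ROUTE}

def pick_model(task: Task, escalated: frozenset[Task] = frozenset()) -> str:
    """Sonnet by default; Haiku for preprocessing; Opus only when escalated."""
    if task in escalated:
        return OPUS  # Sonnet got this task wrong consistently in testing
    if task in HAIKU_TASKS:
        return HAIKU
    return SONNET

escalated = frozenset({Task.CONTRACT_REVIEW})
print(pick_model(Task.ROUTE, escalated))            # claude-haiku-4-5
print(pick_model(Task.SUPPORT, escalated))          # claude-sonnet-4-6
print(pick_model(Task.CONTRACT_REVIEW, escalated))  # claude-opus-4-6
```

Keeping the escalation set explicit means Opus spend only grows when you deliberately add a task to it, rather than by default.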

Migrating a production AI application to a newer model version is not always as simple as changing a model ID in a config file. Prompt engineering patterns that work well on one model version may produce subtly different results on the next. Testing matters.
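One way to make that testing concrete is to run the current and candidate models over a fixed set of cases and approve the upgrade only if the pass rate does not drop. In this sketch the two "models" are hypothetical stub functions. In practice each would wrap an API call pinned to a specific model version. The stubs show the kind of subtle drift mentioned above: the new model answers with different formatting.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Case:
    prompt: str
    check: Callable[[str], bool]  # True if the response is acceptable

def pass_rate(model_fn: Callable[[str], str], cases: list[Case]) -> float:
    return sum(case.check(model_fn(case.prompt)) for case in cases) / len(cases)

def safe_to_migrate(old_fn: Callable[[str], str], new_fn: Callable[[str], str],
                    cases: list[Case], tolerance: float = 0.0) -> bool:
    """Approve the upgrade only if the new model regresses by at most `tolerance`."""
    return pass_rate(new_fn, cases) >= pass_rate(old_fn, cases) - tolerance

cases = [
    Case("Classify: refund not received", lambda r: r.strip().lower() == "billing"),
    Case("Classify: app crashes on login", lambda r: r.strip().lower() == "technical"),
]

# Hypothetical stubs standing in for two model versions.
def old_model(prompt: str) -> str:
    return "billing" if "refund" in prompt else "technical"

def new_model(prompt: str) -> str:
    return "Billing" if "refund" in prompt else "Technical issue"

print(pass_rate(old_model, cases), pass_rate(new_model, cases))  # 1.0 0.5
print(safe_to_migrate(old_model, new_model, cases))              # False
```

A failing check like this usually points to a prompt adjustment rather than a reason to abandon the upgrade. Re-run the same cases after each prompt change.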

The practical migration process:

For teams running multiple AI applications across different business units, model upgrades benefit from centralisation: a shared AI platform layer that manages model versions, handles caching configuration, and provides a rollback mechanism across all applications simultaneously.
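A minimal sketch of such a platform layer is a registry that pins one model ID per application, remembers earlier versions, and can roll every application back in one step. The class, its methods, and the application names are hypothetical, and the model IDs are assumed for illustration. A production version would persist this state and tie it to deployment tooling.

```python
from dataclasses import dataclass, field

@dataclass
class ModelRegistry:
    """Hypothetical central registry of pinned model IDs with rollback history."""
    pinned: dict[str, str] = field(default_factory=dict)
    history: dict[str, list[str]] = field(default_factory=dict)

    def current(self, app: str) -> str:
        return self.pinned[app]

    def upgrade(self, app: str, model_id: str) -> None:
        if app in self.pinned:  # remember the version being replaced
            self.history.setdefault(app, []).append(self.pinned[app])
        self.pinned[app] = model_id

    def rollback(self, app: str) -> str:
        previous = self.history.get(app)
        if not previous:
            raise ValueError(f"no earlier model recorded for {app!r}")
        self.pinned[app] = previous.pop()
        return self.pinned[app]

    def upgrade_all(self, model_id: str) -> None:
        for app in list(self.pinned):
            self.upgrade(app, model_id)

    def rollback_all(self) -> None:
        for app in list(self.pinned):
            if self.history.get(app):
                self.rollback(app)

registry = ModelRegistry()
for app in ("support-bot", "doc-extractor"):
    registry.upgrade(app, "claude-sonnet-4-5")  # assumed model IDs
registry.upgrade_all("claude-sonnet-4-6")
registry.rollback_all()
print(registry.current("support-bot"))  # claude-sonnet-4-5
```

Because every application reads its model ID from one place, an upgrade or a rollback is a single coordinated change instead of an edit in each codebase.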

Infurotech's technology services team has run model upgrade migrations across production enterprise AI deployments in BFSI, healthcare, and retail. If your organisation has multiple AI applications and needs a coordinated upgrade plan, we can design and execute that migration safely.

All applications built through Infurotech's AI Builder service are designed with model version management in mind — upgrades are handled as part of the platform lifecycle, not as emergency engineering projects. And every automation workflow we deliver runs on the current production model with tested upgrade paths.

The models are better. The caching is automatic. The cost savings are real. The question for your team is simply when you plan to take advantage of them — and whether you have the testing infrastructure to migrate safely. Talk to our team if you need help with either.