
If you have been following enterprise AI developments in 2026, you have heard about MCP — the Model Context Protocol. You may have read that it has 100 million monthly downloads, that there are over 200 community-built servers, and that major AI platforms have adopted it as a standard. What you may not have read is a clear explanation of what it actually is, why it matters for enterprise architects specifically, and what the 2026 governance and security roadmap means for organisations building AI applications on top of it.

This article provides that explanation — without the hype, and with specific attention to the enterprise security and compliance requirements that matter for Indian organisations deploying AI in regulated environments.

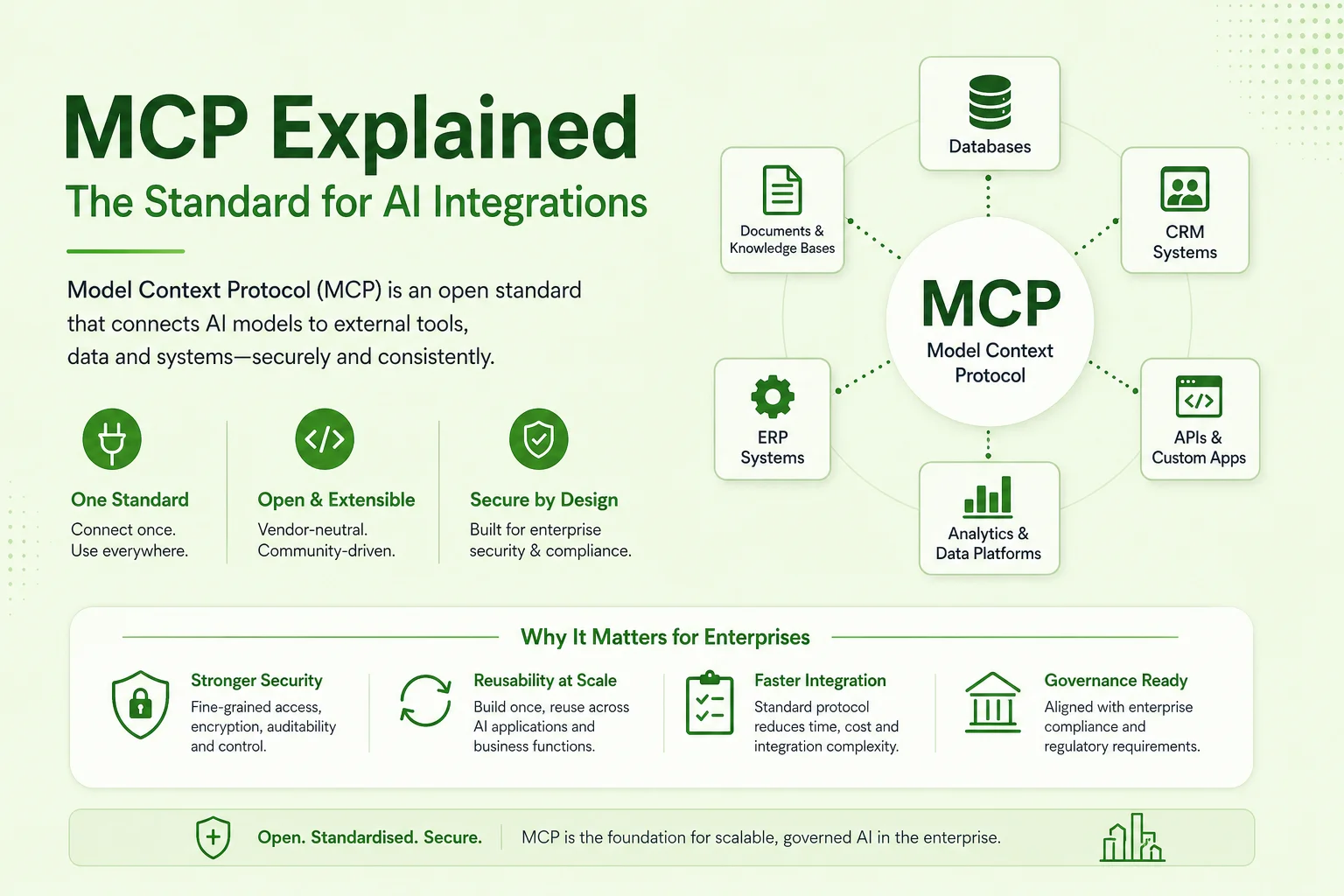

The Model Context Protocol is an open standard for connecting AI models to external tools, data sources, and systems. Think of it the way you think of USB: before USB, every device had a different connector. After USB, any device could connect to any computer with a single standard. MCP does the same thing for AI integrations.

Before MCP, connecting an AI agent to your ERP required custom integration code. Connecting it to your CRM required different custom code. Connecting it to your internal knowledge base, your ticketing system, your financial data feed — each required a separate, bespoke integration. The result was that every AI application had a unique, hard-to-maintain integration layer that could not be reused across applications.

With MCP, an AI agent can connect to any MCP-compatible server using the same protocol. An MCP server is a lightweight wrapper that exposes a system's capabilities — read this database, call this API, search this document repository — in a standardised way that any MCP-compatible AI model can understand and use. Write the MCP server once for your ERP, and every AI application in your organisation can use it.
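To make the "lightweight wrapper" idea concrete, here is a minimal sketch in plain Python with no MCP SDK. MCP messages are JSON-RPC 2.0, and `tools/list` and `tools/call` are method names from the protocol. Everything else is an assumption made for illustration: the `tool` decorator, the `erp_lookup_invoice` stub, and the simplified response shape.

```python
import json
from typing import Any, Callable

# Hypothetical registry: tool name -> (description, handler function).
TOOLS: dict[str, tuple[str, Callable[..., Any]]] = {}


def tool(name: str, description: str):
    """Register a plain function as a tool the server exposes."""
    def decorator(fn):
        TOOLS[name] = (description, fn)
        return fn
    return decorator


@tool("erp_lookup_invoice", "Look up an invoice by number (illustrative stub).")
def erp_lookup_invoice(invoice_no: str) -> dict:
    # A real server would query the ERP here; this stub returns fixed data.
    return {"invoice_no": invoice_no, "status": "PAID"}


def handle(request_json: str) -> str:
    """Dispatch one JSON-RPC 2.0 request and return the JSON response."""
    req = json.loads(request_json)
    method, params = req["method"], req.get("params", {})
    if method == "tools/list":
        result = {"tools": [{"name": n, "description": d}
                            for n, (d, _) in TOOLS.items()]}
    elif method == "tools/call":
        _, fn = TOOLS[params["name"]]
        payload = fn(**params.get("arguments", {}))
        # Tool output is returned as a text content item (simplified).
        result = {"content": [{"type": "text", "text": json.dumps(payload)}]}
    else:
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"),
                           "error": {"code": -32601,
                                     "message": "Method not found"}})
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "result": result})
```

Any client that speaks the same two methods can discover and call the tool without knowing anything about the ERP behind it, which is the reuse the paragraph above describes. A production server would normally be built on an official MCP SDK instead of hand-rolled dispatch.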

This is why MCP adoption has been rapid. It solves a real, painful problem: AI integration complexity. And it does so with a protocol that is open, extensible, and not controlled by any single vendor.

Originally released by Anthropic in late 2024, MCP has since become an industry-wide standard. As of April 2026, the protocol has 100 million monthly downloads across all distribution channels, over 200 publicly available community servers (integrations for GitHub, Slack, Salesforce, Google Drive, Postgres, and dozens of other systems), and support from Microsoft, Google, and AWS as an integration protocol in their AI platforms.

The community server library means that for many common enterprise systems, the MCP integration work has already been done. An organisation connecting Claude or another MCP-compatible AI to GitHub, Jira, Confluence, or Salesforce can use an existing community server rather than building from scratch. For proprietary or custom enterprise systems — internal ERP instances, legacy databases, custom APIs — the integration requires building a custom MCP server, but the protocol provides a clear specification for how to do it.

The scale of adoption is significant for a different reason: it has accelerated the protocol's maturation. Bug fixes, security improvements, and performance optimisations that would have taken years in a smaller ecosystem are happening in months because the user base is large enough to find and report edge cases quickly.

On April 8, 2026, the MCP maintainer team announced formal governance changes: a Contributor Ladder defining how community members can advance to maintainer roles, a Working Group delegation model for specific technical areas (security, transports, observability), and quarterly charter reviews that ensure the roadmap stays aligned with community needs.

For enterprise architects and CTOs, this matters for a specific reason: protocol stability. A protocol governed by an informal group of contributors carries a higher risk of fragmentation, breaking changes, and abandonment. A protocol with a formal governance structure (working groups, defined decision-making processes, regular reviews) has the characteristics of infrastructure that organisations can build on with confidence. The 2026 governance changes move MCP from "promising open-source project" to "enterprise-grade infrastructure standard."

The practical implication: organisations that were hesitating to make MCP a foundational part of their AI architecture due to governance uncertainty now have a clearer picture. The protocol is being maintained with the rigour appropriate to infrastructure that sees 100 million downloads a month.

The five features on the 2026 MCP roadmap address gaps that have been the primary obstacles to enterprise adoption at scale. Each one solves a specific problem that architects hit when moving from prototype to production deployment.

Current MCP deployments typically use one of the two transports defined in the specification: stdio for servers that run locally alongside the client, and Streamable HTTP for remote servers. Remote servers often keep per-session state, so follow-up requests generally need to reach the instance that holds the session. That works well for single-server deployments and low-concurrency scenarios. It does not work well for enterprise deployments that need to scale horizontally across multiple server instances behind a load balancer.

The transport work on the 2026 roadmap addresses this directly by letting the HTTP transport operate statelessly. A stateless request can be handled by any server instance in a cluster, which is what makes horizontal scaling straightforward. For enterprise deployments where thousands of concurrent AI agent sessions need to access the same MCP server, stateless HTTP transport is the prerequisite for production-scale operation.
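The scaling argument can be shown with a small sketch. It assumes a hypothetical `add` tool and a toy round-robin balancer, neither of which comes from the MCP specification. The point is only that when a handler depends on nothing but the request, any instance can answer it.

```python
import itertools
from dataclasses import dataclass


@dataclass
class Request:
    """Everything the server needs travels with the request itself."""
    user: str
    tool: str
    arguments: dict


class ServerInstance:
    def __init__(self, name: str):
        self.name = name

    def handle(self, req: Request) -> dict:
        # No per-connection memory is consulted: the result depends
        # only on the contents of the request.
        if req.tool == "add":
            value = req.arguments["a"] + req.arguments["b"]
            return {"served_by": self.name, "result": value}
        raise ValueError(f"unknown tool: {req.tool}")


class RoundRobinBalancer:
    """Toy load balancer that hands each request to the next instance."""

    def __init__(self, instances):
        self._cycle = itertools.cycle(instances)

    def dispatch(self, req: Request) -> dict:
        return next(self._cycle).handle(req)
```

Sending the same `Request` through the balancer twice reaches two different instances and returns the same `result`. A connection-bound design cannot offer that without extra routing rules.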

Enterprise compliance and governance require that every action taken by an AI agent be logged: what data was accessed, what tools were called, what results were returned, and when. Current MCP implementations leave audit logging to individual server implementations — there is no standardised audit event format and no protocol-level requirement for what must be logged.

The audit trail feature on the 2026 roadmap standardises this at the protocol level. Every MCP interaction would generate a structured audit event with a defined schema, so audit logs from different MCP servers can be aggregated, queried, and analysed consistently. That is critical for compliance with India's Digital Personal Data Protection Act, 2023 (DPDP Act), which requires demonstrating what processing was done on personal data, and for the Reserve Bank of India (RBI) model risk management requirements that govern AI in banking, financial services, and insurance (BFSI).
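Until a protocol-level schema ships, teams can approximate the idea inside their own servers. The sketch below is one possible shape and not the roadmap's schema: the `AuditEvent` fields and the `audited_call` helper are assumptions chosen to cover who called what, with which arguments, when, and with what outcome.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEvent:
    """Hypothetical audit record; field names are illustrative only."""
    server: str
    user_id: str
    tool: str
    arguments: dict
    outcome: str  # "ok" or "error"
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


def audited_call(server, user_id, tool, arguments, fn, sink):
    """Run fn(**arguments) and append one audit event to sink either way."""
    try:
        result = fn(**arguments)
    except Exception:
        sink.append(AuditEvent(server, user_id, tool, arguments, "error").to_json())
        raise
    sink.append(AuditEvent(server, user_id, tool, arguments, "ok").to_json())
    return result
```

Here `sink` is just a list. In practice it would be a log pipeline or an append-only store. Emitting the same JSON shape from every server is what makes cross-server aggregation possible.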

In current MCP implementations, authentication between the AI model and the MCP server is typically handled with API keys or server-level credentials. This means the AI agent authenticates as itself — with its own credentials — rather than as the user on whose behalf it is acting.

SSO integration changes this. With SSO, the MCP server authenticates the AI agent session using the end user's enterprise identity (via SAML or OIDC against the organisation's identity provider). The result is that the AI agent's access to enterprise systems is scoped to what the requesting user is authorised to access — not what the agent's API key is authorised to access. An AI agent acting on behalf of a junior analyst cannot access data that the junior analyst is not permitted to access, even if the agent itself has broader technical access.

This is the right security model for enterprise AI. Access control should follow the user, not the agent.
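A minimal sketch of "access follows the user" might look like the following. It assumes the user's identity token has already been validated against the identity provider and reduced to a `claims` dictionary. The scope names, `TOOL_REQUIRED_SCOPE`, and `call_as_user` are hypothetical.

```python
class AccessDenied(Exception):
    """Raised when the requesting user lacks the scope a tool requires."""


# Hypothetical mapping from tool name to the scope it requires.
TOOL_REQUIRED_SCOPE = {
    "get_payroll": "read:payroll",
    "list_tickets": "read:tickets",
}


def call_as_user(claims: dict, tool: str, backends: dict):
    """Invoke a tool only if the *user's* scopes permit it.

    `claims` stands in for an already-validated OIDC or SAML identity;
    token validation itself is out of scope for this sketch.
    """
    required = TOOL_REQUIRED_SCOPE[tool]
    if required not in set(claims.get("scopes", [])):
        raise AccessDenied(f"{claims.get('sub')} lacks {required}")
    # The agent's own credentials never enter the decision.
    return backends[tool]()
```

With this check in front of every tool, an agent session for a user whose scopes are only `["read:tickets"]` is refused `get_payroll`, even though the backend is technically reachable from the server.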

As MCP deployments grow, organisations need a layer that can apply routing, rate limiting, access control, and monitoring across multiple MCP servers — without each application needing to implement these controls independently. The gateway behaviour specification defines how an MCP gateway operates: routing requests to the appropriate server based on capability, enforcing per-user or per-application rate limits, logging all traffic for observability, and providing a single control plane for managing AI agent access to enterprise systems.

The gateway is the AI equivalent of an API gateway — infrastructure that belongs in the platform layer, not in individual applications. For Indian organisations deploying multiple AI applications across the enterprise, a well-designed MCP gateway layer is what prevents the AI integration landscape from becoming as fragmented as the pre-MCP state was.
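The responsibilities listed above (routing, rate limiting, logging) fit in a few dozen lines when reduced to their essentials. This is an illustrative sketch and not the gateway specification. The `Gateway` class, its sliding-window limiter, and the in-memory log are all assumptions.

```python
import time
from collections import defaultdict, deque


class Gateway:
    """Toy MCP-style gateway: route by tool, rate-limit per user, log all."""

    def __init__(self, routes, max_calls, window_seconds, clock=time.monotonic):
        self.routes = routes            # tool name -> backend callable
        self.max_calls = max_calls      # allowed calls per user per window
        self.window = window_seconds
        self.clock = clock              # injectable for testing
        self.calls = defaultdict(deque)  # user -> timestamps of recent calls
        self.log = []                   # (user, tool, outcome) tuples

    def call(self, user, tool, **arguments):
        now = self.clock()
        recent = self.calls[user]
        # Drop timestamps that have aged out of the sliding window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.max_calls:
            self.log.append((user, tool, "rate_limited"))
            raise RuntimeError("rate limit exceeded")
        if tool not in self.routes:
            self.log.append((user, tool, "no_route"))
            raise KeyError(tool)
        recent.append(now)
        result = self.routes[tool](**arguments)
        self.log.append((user, tool, "ok"))
        return result
```

Because every application calls through the same object, limits and logs are enforced once in the platform layer instead of being re-implemented per application.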

Long-running AI agent workflows, such as an invoice processing agent that works through a queue over several hours or a compliance monitoring agent that runs nightly, need to maintain state across multiple MCP interactions. If the underlying MCP server instance is replaced (because of a deployment, a scaling event, or a failure), the agent's session state must survive the transition.

Stateful session management defines how session state is persisted and recovered in a way that is transparent to the AI agent. It solves the same problem that sticky sessions address in web application load balancing, but at the protocol level and without pinning a session to one instance. It is designed specifically for the AI agent use case, where the state includes tool call history, retrieved context, and intermediate reasoning.
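One common way to get this behaviour today is to keep session state outside the server process. The sketch below assumes a hypothetical `SessionStore` standing in for a shared database or cache. It does not reflect how the roadmap feature will be specified.

```python
import json


class SessionStore:
    """Stand-in for an external store shared by all server instances."""

    def __init__(self):
        self._data = {}

    def save(self, session_id: str, state: dict) -> None:
        # Serialise so that nothing in-process is shared by reference.
        self._data[session_id] = json.dumps(state)

    def load(self, session_id: str) -> dict:
        raw = self._data.get(session_id)
        return json.loads(raw) if raw is not None else {"tool_calls": []}


class ServerInstance:
    def __init__(self, name: str, store: SessionStore):
        self.name = name
        self.store = store

    def call_tool(self, session_id: str, tool: str, arguments: dict) -> dict:
        # Load, update, and persist state on every call so a replacement
        # instance can pick up exactly where this one stopped.
        state = self.store.load(session_id)
        state["tool_calls"].append(
            {"tool": tool, "arguments": arguments, "served_by": self.name})
        self.store.save(session_id, state)
        return state
```

If instance A handles the first two calls of a session and is then replaced by instance B, B's first call returns a history that still contains A's two entries. The agent sees one continuous session.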

Several of these features, including stateless HTTP transport, audit trails, and SSO, are not yet in production release. The roadmap indicates they are coming, but the timing is not firm. This creates a practical question: should organisations wait, or start building on MCP now?

The answer is to start building, but to design for the roadmap: build auditability, identity, and scalability into the MCP layer from the start.

Our integration team designs and builds MCP server implementations for enterprise clients — connecting AI applications to ERP systems, internal databases, document repositories, and third-party APIs. Every implementation we build includes audit logging, secrets management, and a gateway design that accounts for the 2026 roadmap features.

Our AI infrastructure team helps enterprise architects design the full MCP layer — server implementations, gateway configuration, identity integration, and observability — as part of a broader AI platform design. For organisations deploying AI across multiple business functions, the MCP infrastructure layer is as important as the model selection decision.

Our AI Builder service uses MCP as the standard integration protocol for all enterprise system connections — every AI application we build for clients uses MCP to connect to enterprise data, which means every application benefits from the same server library and the same gateway layer. And our automation practice uses MCP-connected workflows for all enterprise automation deployments, providing the data access and tool connectivity that complex multi-step agents require.

MCP is infrastructure, and infrastructure decisions compound. The organisations that design their MCP layer thoughtfully now — with auditability, identity, and scalability in mind — will be in a substantially better position when the 2026 roadmap features ship. Talk to our team if you are designing your AI integration architecture and want a review before you build.