
Every enterprise AI application eventually runs into the same wall: the model is capable, but it cannot see your data. It cannot query your database. It cannot read your internal documents. It cannot check the current state of your systems. Without that context, even the most capable AI is limited to reasoning about the world as it was when it was trained — not the world as it is in your business right now.

The traditional solution to this problem was custom integration code: a bespoke API wrapper for each data source, written specifically for each AI application, maintained as both the model and the data source evolve. Multiply this across five AI applications and ten data sources and you have fifty integration points, each requiring maintenance, each with its own authentication pattern, each potentially breaking whenever either end changes.

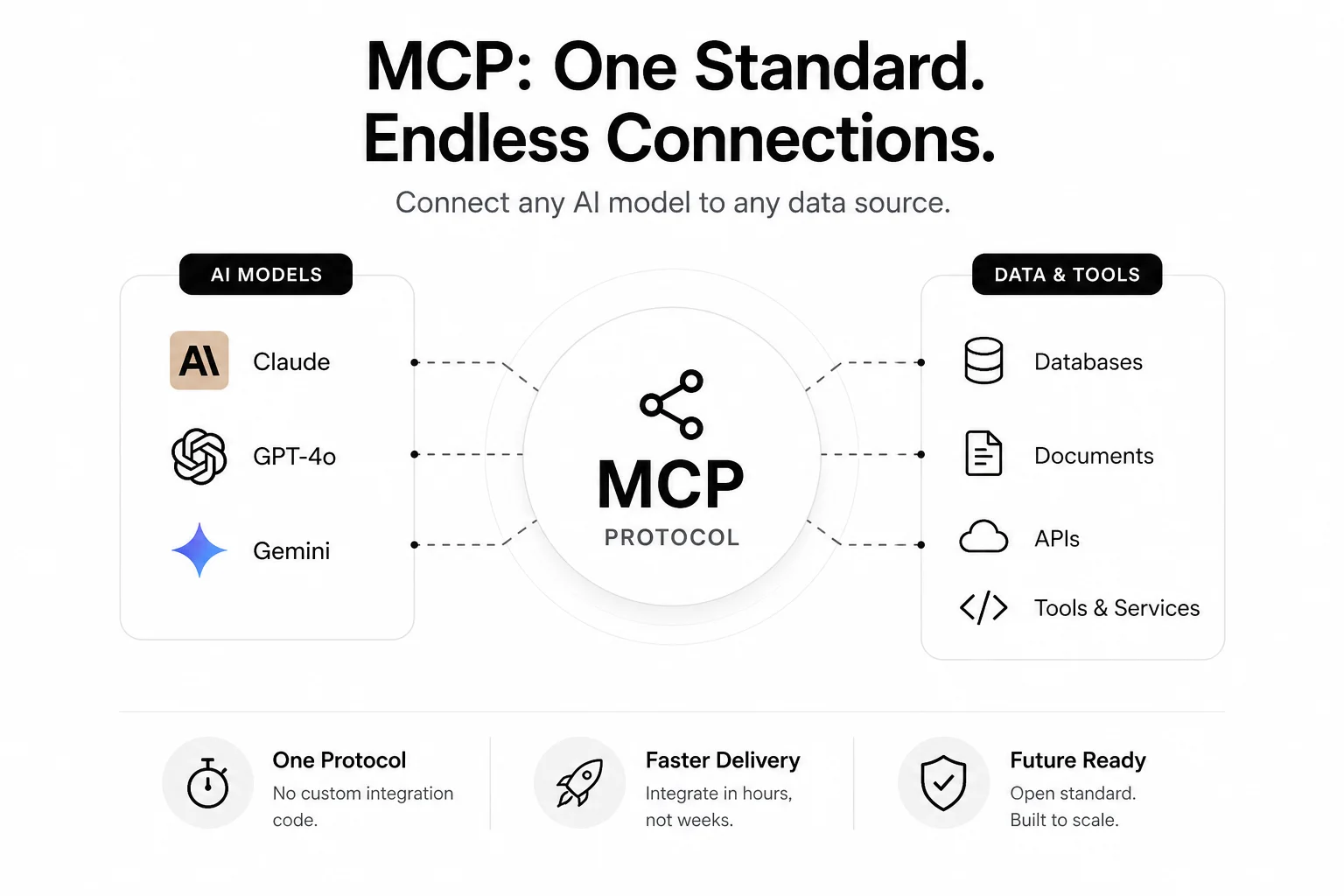

The Model Context Protocol (MCP) replaces this with a single standard. By February 2026, it had crossed 100 million monthly downloads. OpenAI and Google had both added native support. Over 200 community-built MCP servers now exist. What started as an Anthropic developer tool is rapidly becoming the infrastructure layer of enterprise AI.

MCP is a client-server protocol that standardises how AI models connect to external data sources and tools. Think of it as the USB standard for enterprise AI: before USB, every peripheral needed its own connector and driver. After USB, one standard made everything interoperable.

Before MCP: each AI application wrote its own integration code to connect to each data source. After MCP: any MCP-compatible AI client (Claude, GPT-4o, Gemini) can connect to any MCP-compatible data source or tool without custom integration code, using the same protocol.
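The arithmetic behind that shift can be sketched in a few lines, using the figures from earlier (five AI applications, ten data sources). The function names are illustrative, and the second function assumes one client implementation per application and one server per data source.

```python
def point_to_point_integrations(apps: int, sources: int) -> int:
    """Every application needs its own connector to every data source."""
    return apps * sources


def shared_protocol_components(apps: int, sources: int) -> int:
    """Each application implements one client; each source gets one server."""
    return apps + sources


apps, sources = 5, 10
print(point_to_point_integrations(apps, sources))  # 50
print(shared_protocol_components(apps, sources))   # 15
```

The gap widens as the portfolio grows, because the first figure scales with the product of the two counts and the second with their sum.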

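The protocol defines three types of capabilities that a server can expose: resources (data the model can read, such as files or database records), tools (functions the model can invoke to take actions), and prompts (reusable templates for common tasks).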

An MCP server exposes these capabilities for a specific system. An MCP client (the AI application that hosts the model) discovers what capabilities a server offers and uses them to complete tasks. The handshake, authentication, capability discovery, and data format are all standardised, so a new MCP client can connect to any existing MCP server, and a new MCP server is immediately available to all existing MCP clients.
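To make the handshake and discovery steps concrete, here is a minimal sketch of the JSON-RPC 2.0 messages a client sends. MCP messages use JSON-RPC, and `initialize`, `tools/list`, and `tools/call` are method names from the specification. The protocol version string, client name, tool name, and arguments below are assumptions for illustration. The sketch only builds the messages and does not open a connection.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # JSON-RPC request ids must be unique within a session


def request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request, the envelope MCP messages travel in."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# 1. Handshake: the client announces its protocol version and capabilities.
initialize = request("initialize", {
    "protocolVersion": "2025-06-18",  # assumption: whichever revision both sides support
    "capabilities": {},
    "clientInfo": {"name": "example-client", "version": "0.1.0"},  # hypothetical
})

# 2. Discovery: ask the server which tools it exposes.
list_tools = request("tools/list")

# 3. Use: call one of the discovered tools by name.
call_tool = request("tools/call", {
    "name": "query",                    # hypothetical tool name
    "arguments": {"sql": "SELECT 1"},   # hypothetical arguments
})

for message in (initialize, list_tools, call_tool):
    print(json.dumps(message))
```

In a real session the client also sends a `notifications/initialized` notification after the server answers `initialize`. An MCP SDK would normally handle this exchange, so application code rarely builds these messages by hand.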

Consider what connecting an AI to a new data source cost before MCP. An engineering team would need to:

For a simple integration, this is two to five days of engineering work. For a complex ERP or CRM integration, it can be two to four weeks. Multiply by the number of integrations your AI applications need, and you have a significant engineering backlog before you can build the AI features your business actually wants.

With a community-built MCP server for your data source, that work is pre-built. You configure authentication, point your AI at the MCP server, and the AI can immediately access that data source. The community has already written, tested, and documented the integration — you are consuming it, not building it.
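As an illustration of how little configuration is involved, the sketch below generates a client configuration entry in the `mcpServers` format used by Claude Desktop. The server package, the connection string, and the `readonly_user` account are assumptions for illustration. Check the documentation of the server and client you actually deploy.

```python
import json
from typing import Dict, Optional, Sequence


def server_entry(command: str, args: Sequence[str],
                 env: Optional[Dict[str, str]] = None) -> dict:
    """Describe how the client should launch one MCP server process."""
    entry = {"command": command, "args": list(args)}
    if env:
        entry["env"] = dict(env)
    return entry


config = {
    "mcpServers": {
        # Hypothetical entry: a PostgreSQL server launched via npx,
        # connecting with a read-only database account.
        "postgres": server_entry(
            "npx",
            [
                "-y",
                "@modelcontextprotocol/server-postgres",
                "postgresql://readonly_user@db.internal/analytics",
            ],
        )
    }
}

print(json.dumps(config, indent=2))
```

Using a dedicated read-only account in the connection string keeps the database in control of permissions, whatever the model asks for.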

The MCP ecosystem has grown significantly. The following categories of enterprise systems now have community-maintained MCP servers that production deployments are using:

| Category | Available MCP servers | Enterprise use case |
|---|---|---|
| Databases | PostgreSQL, MySQL, MongoDB, Supabase, Redis | AI reads and queries your operational data directly |
| Code and DevOps | GitHub, GitLab, Docker, Kubernetes, Terraform | AI acts on your code repositories and infrastructure |
| Project management | Jira, Linear, Asana, Notion, Confluence | AI reads and creates tickets, docs, and project updates |
| Communication | Slack, Microsoft Teams, Gmail | AI reads conversations and sends notifications |
| Finance and payments | Stripe, QuickBooks, Xero | AI queries financial data and triggers financial actions |
| Design and product | Figma, Miro | AI reads design files and creates design assets |
| Cloud infrastructure | AWS, GCP, Azure | AI monitors and manages cloud resources |
| CRM and sales | Salesforce, HubSpot | AI reads customer data and updates CRM records |

A financial analyst asks Claude: "Show me our top 10 customers by revenue this quarter and flag any who have reduced spending by more than 20% compared to last quarter." Without MCP, this requires a developer to write a specific query, run it, format the results, and paste them into a prompt. With the PostgreSQL MCP server configured, Claude executes this query directly, formats the results, and delivers the analysis — in a single conversational turn.
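The analysis in that request is simple to state precisely. The sketch below shows the ranking and flagging logic on in-memory data; in practice the model would more likely express it as SQL and run it through the database server. The customer names and revenue figures are invented for illustration.

```python
def top_customers_with_flags(current, previous, limit=10, drop_threshold=0.20):
    """Rank customers by current-quarter revenue and flag large declines.

    `current` and `previous` map customer name -> revenue for the quarter.
    """
    ranked = sorted(current.items(), key=lambda kv: kv[1], reverse=True)[:limit]
    report = []
    for name, revenue in ranked:
        prior = previous.get(name, 0)
        # A decline is only meaningful when there was prior spending.
        change = (revenue - prior) / prior if prior else None
        flagged = change is not None and change < -drop_threshold
        report.append({
            "customer": name,
            "revenue": revenue,
            "change": change,
            "flagged": flagged,
        })
    return report


# Hypothetical data for two consecutive quarters.
current = {"Acme": 120_000, "Globex": 90_000, "Initech": 40_000}
previous = {"Acme": 100_000, "Globex": 125_000, "Initech": 45_000}

for row in top_customers_with_flags(current, previous):
    print(row)
```

With this data only Globex is flagged: its revenue fell from 125,000 to 90,000, a 28% drop.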

An engineering manager asks Claude to review their team's sprint backlog, identify tickets that have been in progress for more than five days without updates, and post a gentle reminder comment on each one tagged to the assignee. With the Jira MCP server, Claude reads the backlog, identifies the stale tickets, writes contextually appropriate comments, and posts them — a task that was previously 30 minutes of manual work per sprint.
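The filtering step in this workflow can be sketched as follows. The ticket fields (`key`, `status`, `assignee`, `updated`) and the sample tickets are assumptions for illustration and are not the Jira server's actual schema.

```python
from datetime import datetime, timedelta, timezone


def stale_tickets(tickets, now, max_idle_days=5):
    """Return in-progress tickets with no update in more than max_idle_days."""
    cutoff = now - timedelta(days=max_idle_days)
    return [
        t for t in tickets
        if t["status"] == "In Progress" and t["updated"] < cutoff
    ]


def reminder_comment(ticket, now):
    """Draft a short, polite nudge addressed to the assignee."""
    idle_days = (now - ticket["updated"]).days
    return (
        f"@{ticket['assignee']} this ticket has had no updates for "
        f"{idle_days} days. Is anything blocking it?"
    )


# Hypothetical backlog snapshot.
now = datetime(2026, 3, 10, tzinfo=timezone.utc)
tickets = [
    {"key": "ENG-101", "status": "In Progress", "assignee": "priya",
     "updated": now - timedelta(days=7)},
    {"key": "ENG-102", "status": "In Progress", "assignee": "sam",
     "updated": now - timedelta(days=2)},
    {"key": "ENG-103", "status": "Done", "assignee": "lee",
     "updated": now - timedelta(days=9)},
]

for ticket in stale_tickets(tickets, now):
    print(ticket["key"], "->", reminder_comment(ticket, now))
```

Only ENG-101 qualifies here. ENG-102 was updated recently, and ENG-103 is no longer in progress.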

A CFO preparing a monthly board report asks Claude to pull the previous month's transaction data from Stripe, calculate key metrics, identify anomalies, and draft the revenue section of the board report. With the Stripe MCP server, Claude fetches the data directly, does the analysis, and produces a draft — condensing three hours of financial analyst time into minutes.

MCP significantly increases the surface area through which an AI model can access and act on your systems. Enterprise deployments need to treat MCP servers with the same security rigour as any other privileged access path.
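One way to treat tool calls as a privileged access path is to put a guard in front of them. The sketch below is an assumption about how a team might do this, not part of MCP itself: it permits only allowlisted tool names and writes every attempt to an audit log. `GuardedToolCaller`, `fake_call_tool`, and the tool names are hypothetical.

```python
import logging
from typing import Any, Callable, Dict, Iterable

audit_log = logging.getLogger("mcp.audit")


class ToolNotAllowed(PermissionError):
    """Raised when a caller requests a tool outside the allowlist."""


class GuardedToolCaller:
    """Wrap a tool-calling function with an allowlist and audit logging."""

    def __init__(self, call_tool: Callable[[str, Dict[str, Any]], Any],
                 allowed_tools: Iterable[str]) -> None:
        self._call_tool = call_tool
        self._allowed = frozenset(allowed_tools)

    def call(self, user: str, name: str, arguments: Dict[str, Any]) -> Any:
        if name not in self._allowed:
            audit_log.warning("DENIED user=%s tool=%s", user, name)
            raise ToolNotAllowed(name)
        audit_log.info("ALLOWED user=%s tool=%s args=%s", user, name, arguments)
        return self._call_tool(name, arguments)


def fake_call_tool(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Stand-in for a real MCP client call, so the sketch runs on its own."""
    return {"tool": name, "ok": True}


logging.basicConfig(level=logging.INFO)
guard = GuardedToolCaller(fake_call_tool, allowed_tools={"read_query"})
print(guard.call("analyst@example.com", "read_query", {"sql": "SELECT 1"}))
```

A guard like this complements permissions enforced in the target system, such as read-only database roles or scoped API tokens. It does not replace them.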

Key security principles for enterprise MCP deployment:

MCP is a core part of how we architect AI applications at Infurotech. Every application built through our AI Builder service uses MCP for enterprise system connections where a community server exists — avoiding custom integration code and leveraging the tested implementations the community has already built. For systems without an existing MCP server, we build one — once — and it becomes a reusable connection point for all future AI applications that need that system.

Our integration services team handles the full MCP deployment: server selection or development, authentication configuration, network security, and audit logging. Our automation services team connects MCP-enabled AI to your operational workflows. Our technology architecture team designs the MCP infrastructure to ensure it is maintainable as your AI application portfolio grows.

If your team is building or planning AI applications that need to connect to enterprise systems, understanding MCP is foundational. The 200+ available servers mean that for most common enterprise systems, the integration work is already done — you just need to deploy and configure it. Talk to our team about how MCP fits into your AI infrastructure strategy.