AI Consulting

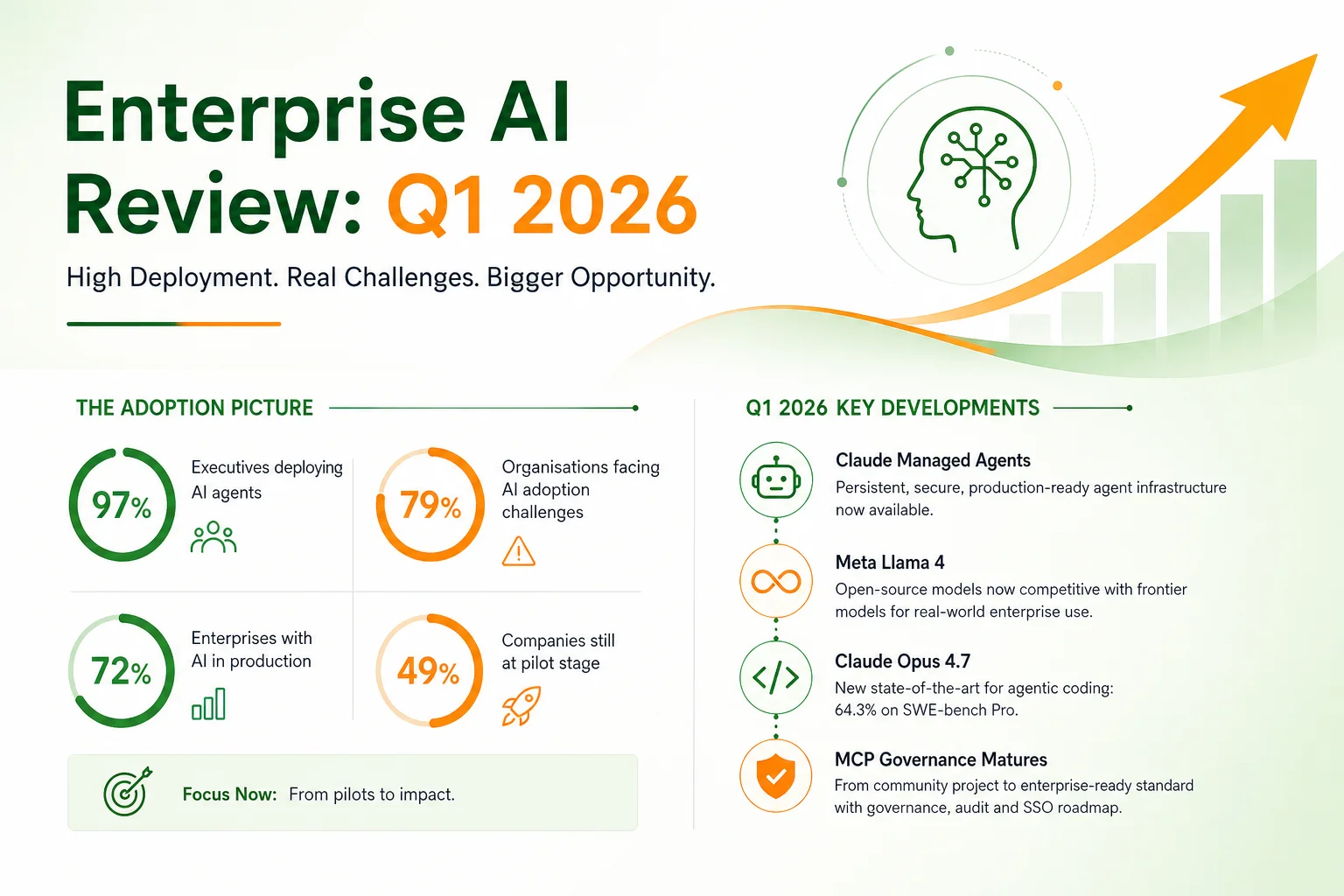

Q1 2026 ended with a data point that captures the state of enterprise AI better than any single technology announcement: 97% of executives reported deploying AI agents in the past twelve months, while 79% of organisations reported facing significant AI adoption challenges in the same period. The deployment rate and the challenge rate are both at historic highs, simultaneously. That is not a contradiction — it is the defining characteristic of enterprise AI in 2026. Deploying AI is easy. Making it work is not.

This review covers what changed in Q1 2026, where Indian enterprises stand relative to global peers, and what the next 90 days need to look like for organisations that are serious about closing the adoption gap.

The quarter produced a denser release schedule than any previous period in enterprise AI history. The changes that matter most for enterprise strategy — not for developer news cycles — are the following.

Claude Managed Agents went to public beta. Anthropic launched a fully managed agent harness: persistent state across multi-hour workflows, secure sandboxing, scoped permissions, and credential management without hardcoded secrets. This removes the primary infrastructure barrier to enterprise agent deployment. The build vs. buy calculation for organisations wanting to run persistent AI agents shifted substantially in Q1.

Meta's Llama 4 made open-source competitive with frontier models for a meaningful category of tasks. Llama 4 Maverick matches GPT-4o on broad benchmarks. Llama 4 Scout has a 10 million token context window and runs on a single H100 GPU. For Indian enterprises with data residency requirements or high-volume cost constraints, open-weight models are now a legitimate part of the model selection conversation rather than a compromise choice.

Claude Opus 4.7 set a new ceiling for agentic coding. A score of 64.3% on SWE-bench Pro means the model correctly resolved nearly two-thirds of the benchmark's tasks, which are drawn from real software engineering issues, without human intervention. For software teams, this changes what AI-assisted development means: it is no longer autocomplete at scale but autonomous resolution of a majority of benchmark-style issues.

MCP governance matured. The Model Context Protocol — the standard for connecting AI agents to enterprise systems — moved from community project to formally governed infrastructure with a defined contributor ladder, working groups, and a roadmap that addresses enterprise-critical gaps: audit trails, SSO integration, and HTTP transport for horizontal scaling. Organisations that were waiting for MCP governance clarity before committing to it as infrastructure now have that clarity.

The numbers that matter for enterprise strategy are not the release announcements — they are the adoption and outcome data.

72% of global enterprises now have AI in production, up from 55% in early 2024. That is a 17-point increase in roughly two years. The majority of these production deployments are in the Level 1–2 range on the autonomy spectrum: chatbots, copilots, and AI-assisted generation. Fully autonomous agents in production remain a minority of deployments.

97% of executives reported deploying AI agents — but only 52% of employees report actively using AI tools in their daily work. The deployment-to-adoption gap is the core enterprise AI challenge of 2026. Organisations are deploying tools faster than they are embedding those tools into actual workflows, and the tools that are not embedded in workflows do not produce ROI regardless of their technical capability.

79% of organisations reported AI adoption challenges — the highest year-over-year increase since tracking began. The challenges are consistent across geographies: integration with existing systems (cited by 58% of respondents), data quality and governance (54%), change management (51%), and the absence of clear success metrics (47%). These are not technology problems. They are organisational and operational problems that technology alone cannot solve.

49% of companies are still at the pilot stage despite more than two years of active AI investment. These are organisations that have run proof-of-concepts, built internal capabilities, and deployed limited trials — but have not moved a meaningful AI application to production scale. The pilot stage is where organisations learn; it is not where they generate returns. Remaining in pilot indefinitely is the most expensive AI strategy there is.

Indian enterprise AI adoption has distinctive characteristics relative to global peers — some advantageous, some not.

Where Indian enterprises lead: cost sensitivity drives ROI discipline. Indian enterprises adopting AI are generally more focused on measurable cost reduction than their Western counterparts, which produces more disciplined use-case selection. The large captive technology talent pool in Indian conglomerates means that technical implementation capability is less of a constraint than it is for comparable-size companies in other markets. And Indian enterprises have navigated complex, frequently changing regulatory environments for decades, so the compliance dimension of AI governance is not unfamiliar territory.

Where Indian enterprises lag: governance frameworks for AI are underdeveloped relative to deployment pace. Organisations are deploying agents faster than they are establishing the audit trails, human-in-the-loop checkpoints, and model risk management processes that responsible deployment requires. The multi-vendor AI strategy is also underdeveloped — most Indian enterprises are effectively single-vendor in their AI platform choices, which creates concentration risk in a market that is moving fast enough that today's leading model may not be the right choice twelve months from now. Change management investment is consistently under-budgeted relative to technology investment.

Across the organisations that made measurable progress in Q1 — moved from pilot to production, demonstrated quantifiable ROI, scaled adoption beyond the initial team — three characteristics are consistent.

They started with a specific problem, not an AI strategy. "We will use AI to improve operations" is not a strategy — it is a direction. The organisations that moved to production defined a specific workflow, a specific team, and a specific success metric before deploying anything. The goal was not to deploy AI; the goal was to reduce invoice processing time from 5 days to 1 day, or to increase first-contact resolution on customer support tickets from 35% to 60%. When the goal is specific, the technology selection and deployment design follow naturally. When the goal is "adopt AI," every decision is open-ended and nothing ships.
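A specific goal of this kind can be written down as a baseline, a target, and a way to measure progress between them. The following is a minimal sketch, not a prescribed method: the `SuccessMetric` class and its field names are hypothetical, and the baseline and target figures are the two examples quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessMetric:
    """A single, measurable goal for one workflow (illustrative names)."""
    name: str
    baseline: float  # value before the AI deployment
    target: float    # value the deployment must reach to count as a success

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far.

        Works whether the metric should fall (processing days) or rise
        (resolution rate), because the sign of the gap cancels out.
        """
        if self.target == self.baseline:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / (self.target - self.baseline)

    def met(self, current: float) -> bool:
        """True once the current value has reached or passed the target."""
        return self.progress(current) >= 1.0


# The two goals quoted in the text.
invoice_days = SuccessMetric("invoice_processing_days", baseline=5, target=1)
first_contact = SuccessMetric("first_contact_resolution_pct", baseline=35, target=60)
```

With a metric defined this way, a status check is one call: `invoice_days.progress(3)` reports how much of the gap from 5 days to 1 day has been closed at a (hypothetical) current value of 3 days, and `invoice_days.met(3)` says whether the pilot has earned its move to production.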

They built governance before they built scale. The organisations that hit adoption challenges in Q1 — the 79% — disproportionately tried to deploy at scale before establishing the governance layer: audit trails, human-in-the-loop checkpoints, rollback procedures, access controls. When something went wrong (and in autonomous agent deployments, something always eventually goes wrong), they had no recovery procedure and no audit trail to diagnose the problem. The organisations that succeeded built the governance layer first, which made the scale-up straightforward rather than a crisis.

They used AI builders for speed and consultants for depth, not one or the other. The two-track approach pairs AI builder tools (Claude Code, Supabase, Vercel, and their equivalents) for rapid prototyping and initial production deployment with specialist expertise for integration hardening, governance design, and change management. It consistently outperformed both the "build everything slowly" approach and the "buy a platform and hope for the best" approach. Speed and depth are not in conflict when you match the tool to the task.

August 2026 is the next major enforcement milestone for the EU AI Act: the date when requirements for high-risk AI systems become enforceable for organisations operating in or exporting to the European Union. Indian companies with EU customers, EU operations, or EU regulatory relationships need to understand what this means.

High-risk AI systems under the EU AI Act include AI used in employment decisions, credit scoring, biometric identification, and critical infrastructure — categories that encompass a significant range of enterprise AI deployments. The requirements are not trivial: conformity assessments, technical documentation, human oversight mechanisms, post-market monitoring, and registration in the EU database of high-risk AI systems.

For Indian IT services companies whose EU clients ship products that incorporate AI, the AI Act applies to the product, not just the organisation that deploys it. If an Indian IT firm builds an AI-powered loan decisioning tool for a European bank, the Act's requirements apply to that tool. The documentation and governance work for August 2026 compliance needs to start now, not in July.

The 90-day window from May through July is the most consequential for Indian enterprises that are still in the gap between pilot and production. Four priorities matter most.

Take one workflow from pilot to production. Pick the AI application that has already demonstrated the best pilot results and make the investments — integration hardening, user training, support processes, monitoring — needed to run it in production at scale. The learning from one production deployment is worth more than five additional pilots.

Implement basic agent governance. For every AI agent that takes action on your behalf — submits documents, sends communications, enters data into systems of record — establish the minimum governance layer: audit logging of every action, a human review checkpoint before irreversible actions, and a documented rollback procedure. This does not require a governance programme or a policy document. It requires engineering discipline applied to specific deployment decisions.
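The minimum governance layer described above can be sketched as a thin wrapper around whatever the agent does. This is an illustration under stated assumptions, not a reference implementation: `AgentAction`, `GovernedRunner`, and the `approve` hook are hypothetical names, the audit log is an in-memory list standing in for durable storage, and the human review step is reduced to a callback.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class AgentAction:
    """One action an agent wants to take (all names are illustrative)."""
    name: str
    payload: dict
    irreversible: bool                                   # e.g. sending an email, filing a document
    execute: Callable[[dict], str]                       # performs the action
    rollback: Optional[Callable[[dict], None]] = None    # documented undo step, if any


@dataclass
class GovernedRunner:
    """Wraps agent actions with audit logging, human review, and rollback."""
    approve: Callable[[AgentAction], bool]               # human review hook (assumed)
    audit_log: list = field(default_factory=list)        # stand-in for durable storage
    completed: list = field(default_factory=list)

    def _audit(self, action: AgentAction, status: str, detail: str = "") -> None:
        self.audit_log.append({
            "ts": time.time(),
            "action": action.name,
            "payload": action.payload,
            "status": status,
            "detail": detail,
        })

    def run(self, action: AgentAction) -> Optional[str]:
        """Log the request, gate irreversible actions on approval, then execute."""
        self._audit(action, "requested")
        if action.irreversible and not self.approve(action):
            self._audit(action, "rejected")
            return None
        try:
            result = action.execute(action.payload)
        except Exception as exc:
            self._audit(action, "failed", str(exc))
            raise
        self._audit(action, "executed", result)
        self.completed.append(action)
        return result

    def rollback_all(self) -> None:
        """Undo completed actions in reverse order where a rollback exists."""
        while self.completed:
            action = self.completed.pop()
            if action.rollback is None:
                self._audit(action, "rollback_unavailable")
                continue
            action.rollback(action.payload)
            self._audit(action, "rolled_back")
```

The design choice worth noting is that every path through `run` writes to the audit log, including rejections and failures, so the log can be used to reconstruct what the agent attempted as well as what it did. In a real deployment the `approve` callback would route to a review queue and the log would go to append-only storage.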

Build a multi-model strategy. Evaluate whether your current AI deployments are appropriately matched to the model capabilities available. High-volume, single-turn tasks are candidates for cost-efficient models (Llama 4, Haiku). Complex reasoning and multi-step agentic tasks are where frontier models (Opus 4.7, Gemini Ultra) pay for themselves. Being locked into a single model for everything is both more expensive and more fragile than a deliberate multi-model approach.
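One way to make the matching rule explicit is a small routing function. The sketch below is a simplification under assumptions: the `Task` fields, the tier labels, and the volume threshold are all hypothetical placeholders, and the tiers would need to be mapped to whichever models an organisation has actually evaluated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Describes a workload for routing purposes (illustrative fields)."""
    name: str
    multi_step: bool            # needs planning or tool use across several steps
    needs_deep_reasoning: bool  # complex reasoning rather than extraction or lookup
    monthly_volume: int         # approximate number of requests per month


# Placeholder tier labels, not model identifiers.
COST_EFFICIENT = "cost-efficient-tier"
FRONTIER = "frontier-tier"

# Assumed cut-off for "high volume"; tune against your own cost data.
HIGH_VOLUME_THRESHOLD = 100_000


def choose_tier(task: Task) -> str:
    """Send complex or multi-step work to the frontier tier, the rest to the cheaper tier."""
    if task.multi_step or task.needs_deep_reasoning:
        return FRONTIER
    return COST_EFFICIENT


def needs_cost_review(task: Task) -> bool:
    """Flag high-volume work that lands on the frontier tier for a cost review."""
    return choose_tier(task) == FRONTIER and task.monthly_volume >= HIGH_VOLUME_THRESHOLD
```

A single-turn extraction job running hundreds of thousands of times a month routes to the cost-efficient tier, while a multi-step review agent routes to the frontier tier. The `needs_cost_review` flag picks out the expensive combination, complex and high-volume, which is where a deliberate evaluation is most likely to pay off.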

Start EU AI Act documentation if you have EU exposure. The documentation for high-risk AI systems takes 3–6 months to prepare correctly. Starting in Q2 for August enforcement is tight but achievable. Starting in July is not.
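The timing argument is simple date arithmetic, sketched below. Two assumptions are built in and should be checked against your own legal advice: the enforcement date is taken as 2 August 2026 (the text above says only "August 2026"), and the 3-month minimum is the lower bound of the preparation estimate above. The function names are illustrative.

```python
from datetime import date

# Assumed exact date; the text above specifies only "August 2026".
ENFORCEMENT_DATE = date(2026, 8, 2)

# Lower bound of the 3-6 month preparation estimate quoted above.
MIN_PREP_MONTHS = 3


def whole_months_between(start: date, end: date) -> int:
    """Number of complete calendar months from start to end (never negative)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month is not yet complete
    return max(months, 0)


def enough_time(start: date) -> bool:
    """True if a start date leaves at least the minimum preparation time."""
    return whole_months_between(start, ENFORCEMENT_DATE) >= MIN_PREP_MONTHS
```

Calling `enough_time(date(2026, 4, 1))` and `enough_time(date(2026, 7, 1))` contrasts a start at the beginning of Q2 with a start in July. Even the earlier start clears only the lower bound of the estimate, which is why the window is described as tight.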

Our strategic consulting team works with Indian enterprises at the use-case selection and governance design stage — before deployment decisions are made, when the choices still have the most leverage. A focused Q2 AI sprint — use-case selection, governance framework, and first production deployment scoped and planned — takes four to six weeks and gives organisations a clear path from the pilot trap to production.

Our AI Builder service uses the two-track approach described above: rapid prototyping using Claude Code and modern tooling, followed by production hardening and integration. The typical timeline from requirements to first production deployment is two to four weeks for well-scoped internal tools. Our automation practice takes existing workflows — invoice processing, onboarding, reporting, compliance monitoring — and builds the production automation that the 79% of organisations facing adoption challenges have not yet achieved.

For regulated industries, our industry solutions team brings sector-specific regulatory awareness to every deployment: RBI and SEBI requirements for BFSI, DPDP Act 2023 for any personal-data processing, and EU AI Act requirements for organisations with European exposure.

Quick wins in Q2 include ERP workflow automation for finance teams stuck in month-end close cycles, and recruitment automation that cuts time-to-hire for high-volume hiring programmes.

Q1 2026 demonstrated conclusively that the technology is not the constraint. The organisations that made progress had the same models and tools available to them as the ones that didn't. The difference was discipline in use-case selection, investment in governance, and the organisational will to move from pilot to production. Those are choices, not capabilities. Talk to our team about what your Q2 AI sprint should look like.